# Set up LLM-as-a-judge online evaluators

[Online evaluations](/langsmith/evaluation-concepts#online-evaluations) provide real-time feedback on your production traces. Use them to monitor your application's performance continuously: identify issues, measure improvements, and ensure consistent quality over time.

**[LLM-as-a-judge](/langsmith/evaluation-concepts#llm-as-judge)** evaluators use an LLM to evaluate traces as a scalable substitute for human-like judgment. This guide covers **run-level** evaluators that evaluate a single run. For evaluating entire conversation threads, see [multi-turn online evaluators](/langsmith/online-evaluations-multi-turn).

When an online evaluator runs on any run within a trace, the trace will be auto-upgraded to [extended data retention](/langsmith/administration-overview#data-retention-auto-upgrades). This upgrade will impact trace pricing, but ensures that traces meeting your evaluation criteria (typically those most valuable for analysis) are preserved for investigation.

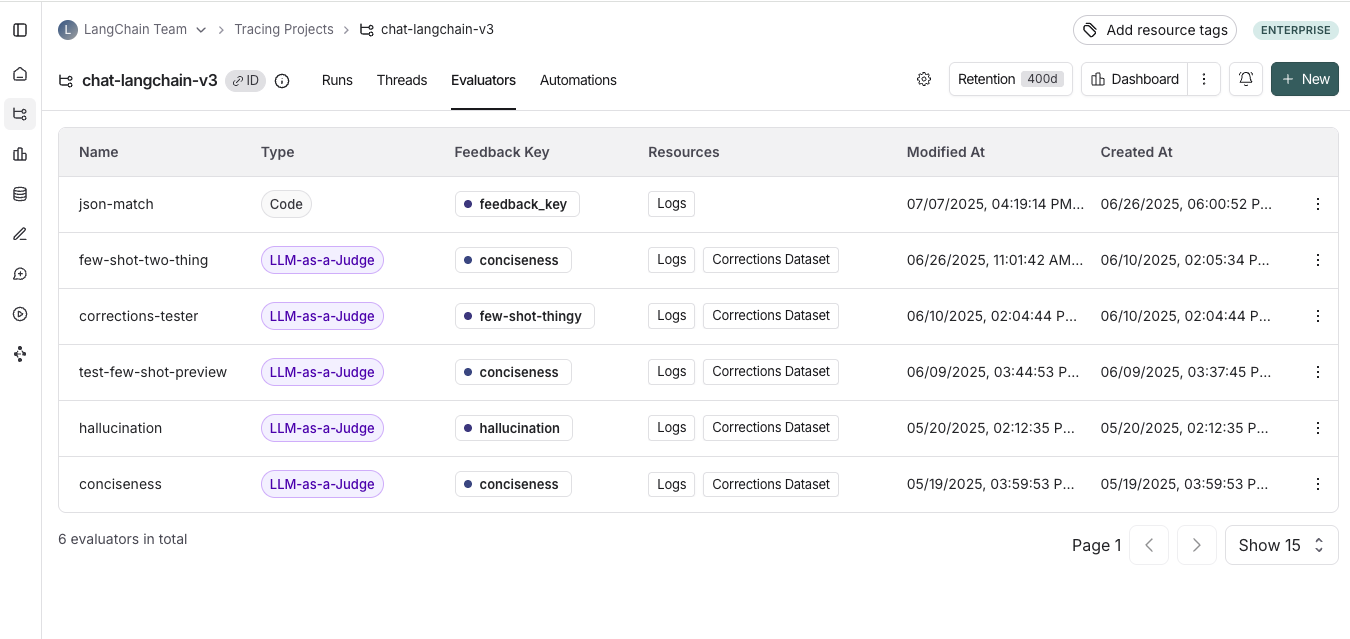

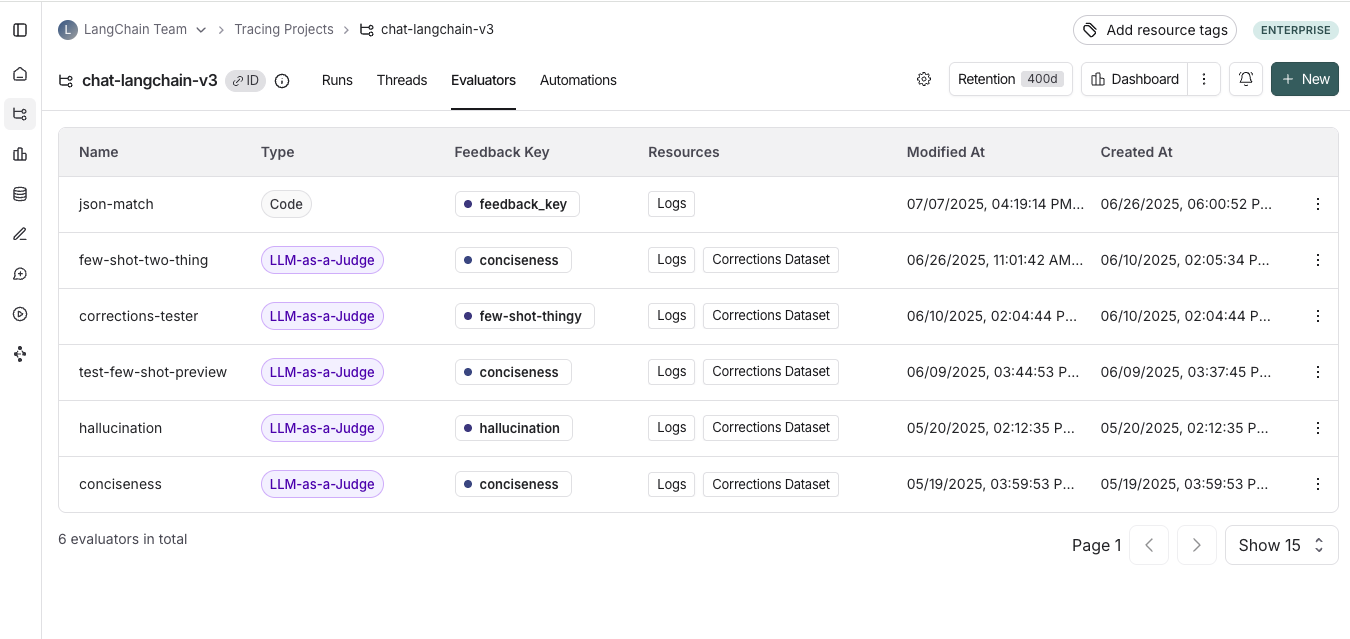

## View online evaluators

In the [LangSmith UI](https://smith.langchain.com?utm_source=docs\&utm_medium=cta\&utm_campaign=langsmith-signup\&utm_content=langsmith-online-evaluations-llm-as-judge), head to the **Tracing Projects** tab and select a tracing project. To view existing online evaluators for that project, click on the **Evaluators** tab.

## Add an online evaluator

1. In the [LangSmith UI](https://smith.langchain.com?utm_source=docs\&utm_medium=cta\&utm_campaign=langsmith-signup\&utm_content=langsmith-online-evaluations-llm-as-judge), navigate to the **Tracing** page and select a tracing project.

2. Click the **Evaluators** tab.

3. Click **+ Evaluator** to open the **Add Evaluator** panel.

4. Choose one of the following:

* **Create from scratch**: Select **LLM-as-a-Judge Evaluator**.

* **Attach an existing evaluator**: Select an evaluator already in your workspace to reuse it.

* **Create from a template**: Start from a ready-made evaluator.

5. Name your evaluator.

## Apply a filter to runs that trigger the evaluator

You can apply a filter to the runs that trigger the evaluator. You may want to apply an evaluator based on:

* Runs where a [user left feedback](/langsmith/attach-user-feedback) indicating the response was unsatisfactory.

* Runs that invoke a specific tool call. See [filtering for tool calls](/langsmith/filter-traces-in-application#example-filtering-for-tool-calls) for more information.

* Runs that match a particular piece of metadata (e.g. if you log traces with a `plan_type` and only want to run evaluations on traces from your enterprise customers). See [adding metadata to your traces](/langsmith/add-metadata-tags) for more information.

[Filters on evaluators](/langsmith/filter-traces-in-application) work the same way as when you're filtering traces in a project.
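
To make the metadata case above concrete, here is a minimal local sketch of which runs a `plan_type` filter would select. It does not call LangSmith or reproduce its filter engine; the run dictionaries and the `matches_metadata` helper are hypothetical and exist only for illustration.

```python
from typing import Any

# Hypothetical run records; real LangSmith runs carry many more fields.
runs: list[dict[str, Any]] = [
    {"id": "run-1", "metadata": {"plan_type": "enterprise"}},
    {"id": "run-2", "metadata": {"plan_type": "free"}},
    {"id": "run-3", "metadata": {}},  # no plan_type logged
]


def matches_metadata(run: dict[str, Any], key: str, value: str) -> bool:
    """Return True when the run's metadata has `key` set to `value`."""
    return run.get("metadata", {}).get(key) == value


# Only runs tagged as enterprise would trigger the evaluator.
selected_ids = [r["id"] for r in runs if matches_metadata(r, "plan_type", "enterprise")]
print(selected_ids)
```

Runs that never logged `plan_type` are excluded, so the filter only helps if your application attaches that metadata consistently.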

It's often helpful to inspect runs as you're creating a filter for your evaluator. With the evaluator configuration panel open, you can inspect runs and apply filters to them. Any filters you apply to the runs table will automatically be reflected in filters on your evaluator.

If you also have a webhook automation rule on this project and want the webhook payload to include this evaluator's scores, add a feedback filter to the webhook rule rather than relying on rule ordering. For example, filter on `has(feedback_key, "answer_usefulness")` so the webhook only fires after the score exists. See [Ensuring evaluations complete before the webhook fires](/langsmith/webhooks#ensuring-evaluations-complete-before-the-webhook-fires) for details.

## Configure a sampling rate

Configure a sampling rate to control the percentage of filtered runs that trigger the evaluator. For example, to control costs, you may want to apply the evaluator to only 10% of the runs that match your filter. To do this, set the sampling rate to 0.1.
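
The effect of a sampling rate can be illustrated with a short simulation. This is a sketch only: it assumes each filtered run is selected independently with probability equal to the sampling rate, which may differ from how LangSmith samples internally. The `sample_runs` helper and the run IDs are hypothetical.

```python
import random


def sample_runs(run_ids: list[str], sampling_rate: float, seed: int = 0) -> list[str]:
    """Keep each run independently with probability `sampling_rate`."""
    if not 0.0 <= sampling_rate <= 1.0:
        raise ValueError("sampling_rate must be between 0 and 1")
    rng = random.Random(seed)  # seeded so the simulation is repeatable
    return [run_id for run_id in run_ids if rng.random() < sampling_rate]


# Hypothetical IDs of runs that already passed the evaluator's filter.
filtered_run_ids = [f"run-{i}" for i in range(10_000)]
sampled = sample_runs(filtered_run_ids, sampling_rate=0.1)
print(f"{len(sampled)} of {len(filtered_run_ids)} filtered runs would be evaluated")
```

With a rate of 0.1, roughly one in ten filtered runs is evaluated, so evaluator LLM usage scales down by about the same factor.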

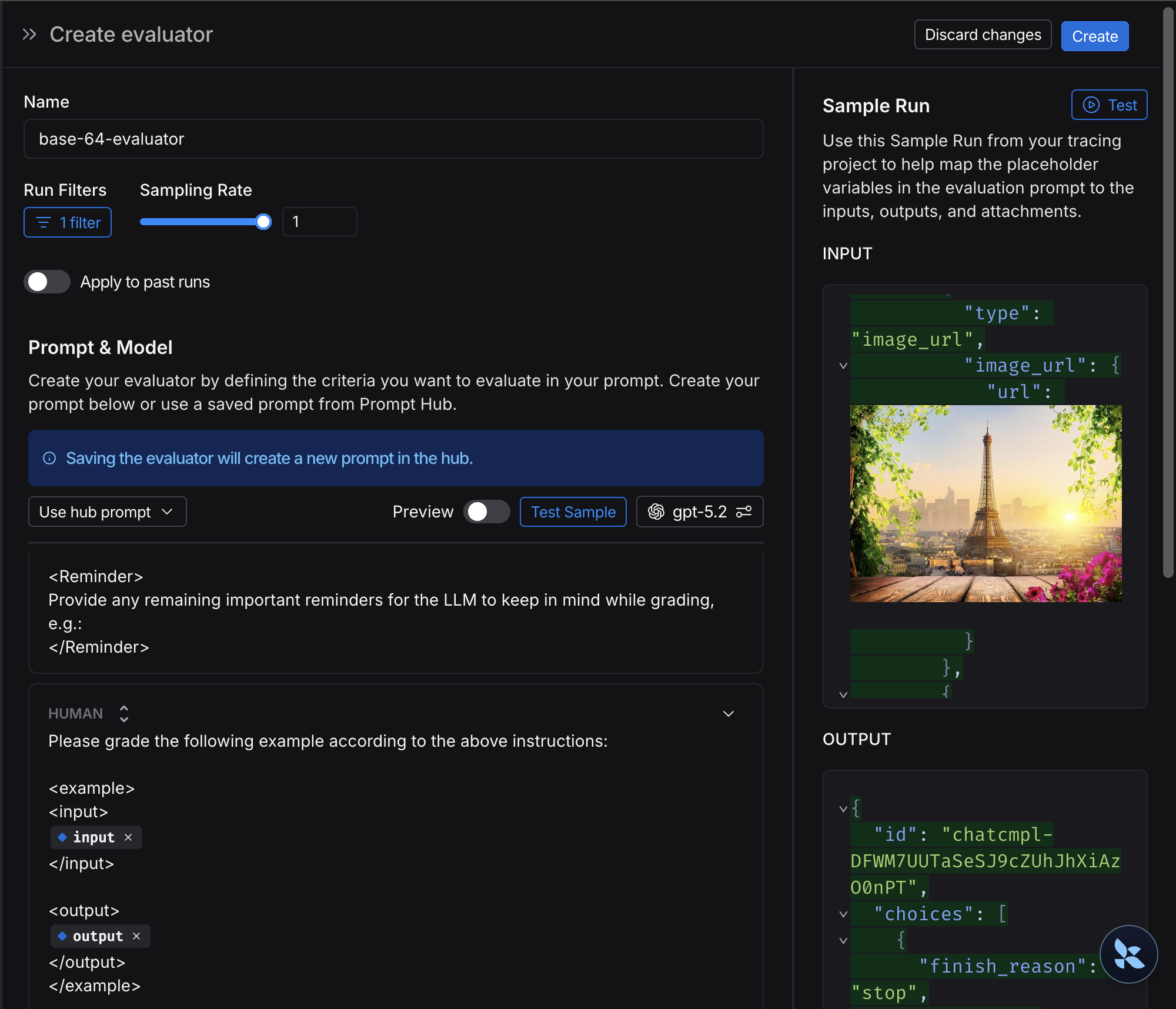

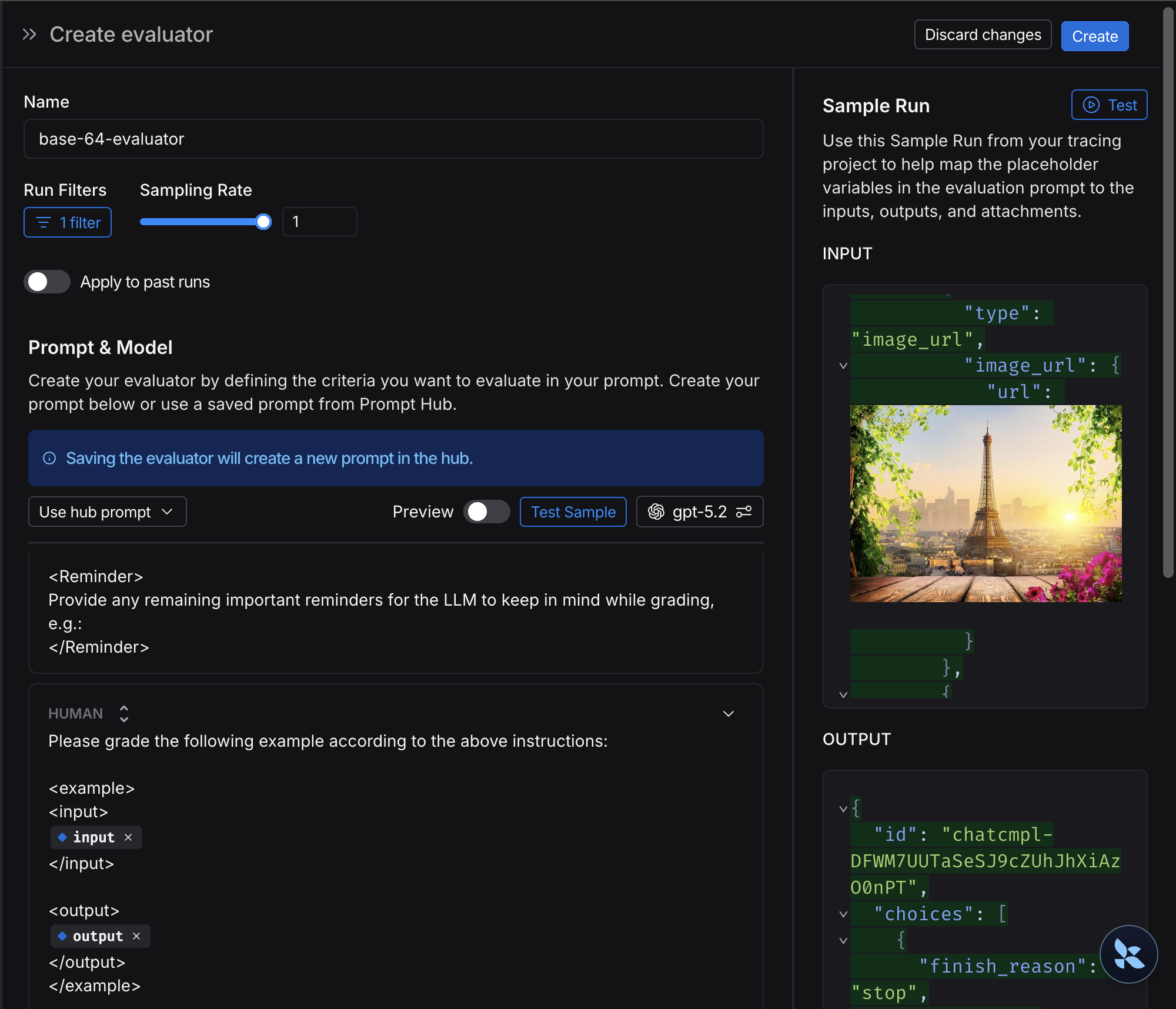

## Apply a rule to past runs

Apply a rule to past runs by toggling **Apply to past runs** and entering a "Backfill from" date. This option is only available when you create the rule.

The backfill is processed as a background job, so you will not see the results immediately.

To track the progress of the backfill, view the logs for your evaluator: go to the **Evaluators** tab within a tracing project and click the **Logs** button for the evaluator you created. Online evaluator logs are similar to [automation rule logs](/langsmith/rules#view-logs-for-your-automations).

1. Add an evaluator name.

2. Optionally filter runs that you would like to apply your evaluator on or configure a sampling rate.

3. Select **Apply Evaluator**.

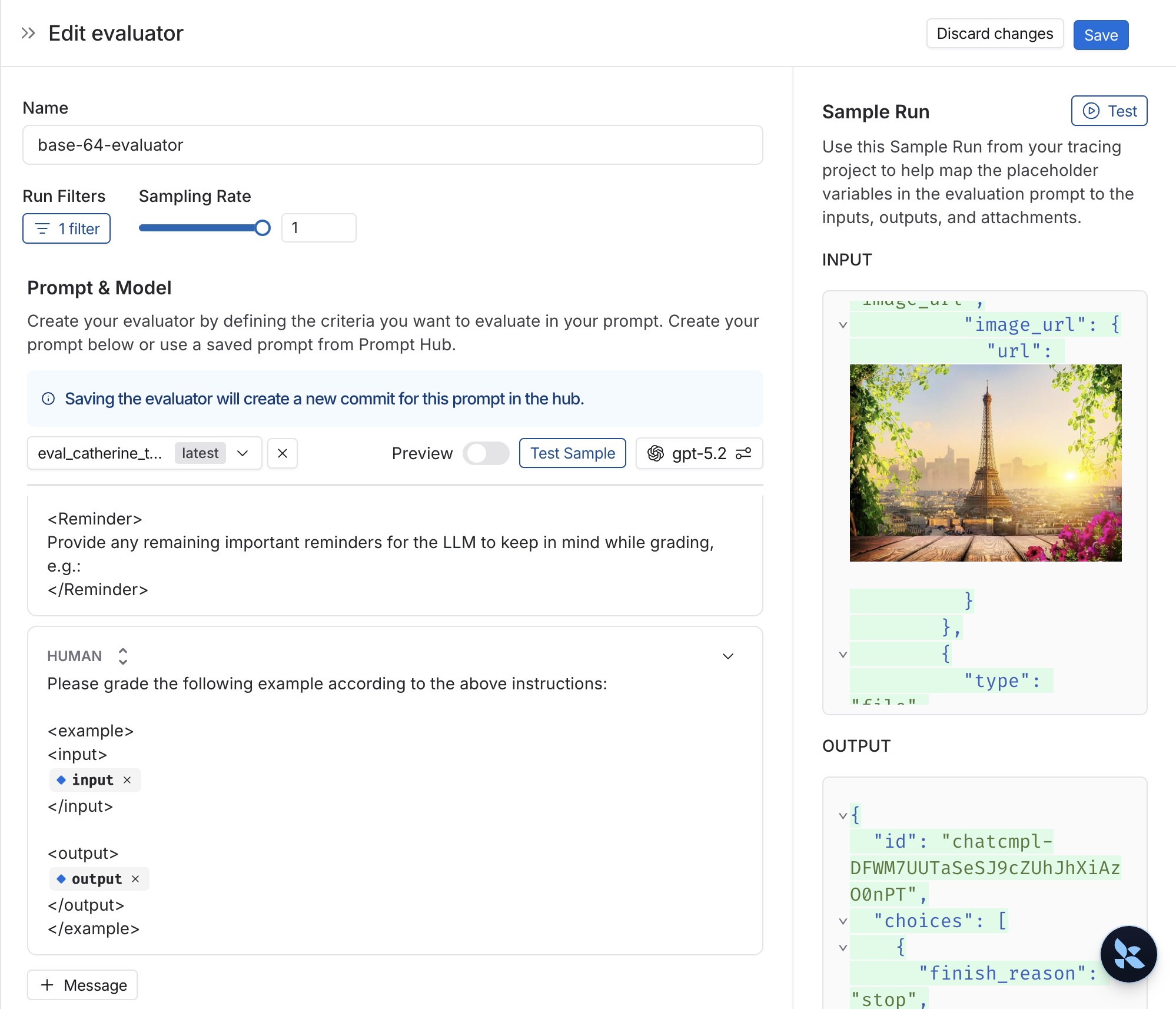

## Configure the LLM-as-a-judge evaluator

View [LLM-as-a-judge evaluators](/langsmith/llm-as-judge#evaluator-templates) for more information.

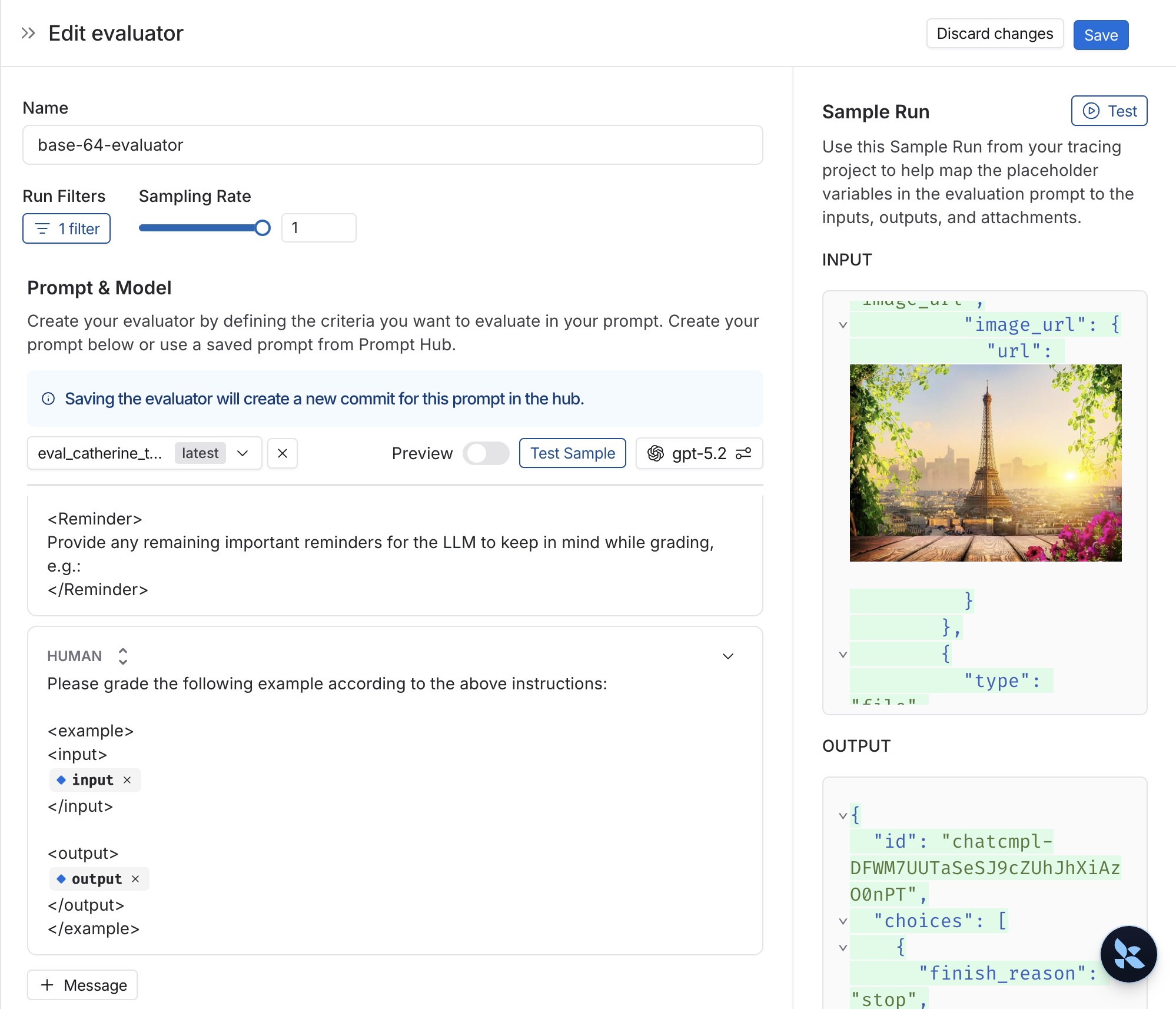

## Map multimodal content to evaluator

If your traces contain multimodal content like images, audio, or documents, you can include this content in your evaluator prompts. There are two approaches:

* **Using base64-encoded content from traces**: If your application logs multimodal content as base64-encoded data in the trace (for example, in the input or output of a run), you can reference this content directly in your evaluator prompt using template variables. The evaluator will extract the base64 data from the trace and pass it to the LLM.

* **Using attachments from traces**: Similar to [offline evaluations with attachments](/langsmith/evaluate-with-attachments), you can use attachments from your traces in online evaluations. Since your traces already include attachments logged via the SDK, you can reference them directly in your evaluator.

1. Select **+ Evaluator** from the dataset page.

2. In the **Template variables** editor, add a variable for the attachment(s) to include:

* To include a specific attachment, use the suggested variable name, such as `{{attachment.file_name}}`. This maps the file named `file_name` in the attachment list and passes it to the evaluator.

* To include all attachments, use the `{{attachments}}` variable.

The evaluator can then access these attachments when evaluating the trace. This is useful for evaluators that need to:

* Verify if an image description matches the actual image in the trace.

* Check if a transcription accurately reflects the audio input.

* Validate if extracted text from a document is correct.
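
For the base64 approach described earlier in this section, the following sketch shows one way an application might encode an image before logging it as a run input. The data URL format, the `to_base64_data_url` helper, and the `question` and `image` field names are assumptions for illustration; check which encoding your model provider and evaluator prompt expect.

```python
import base64


def to_base64_data_url(raw: bytes, mime_type: str) -> str:
    """Encode raw bytes as a base64 data URL string."""
    encoded = base64.b64encode(raw).decode("ascii")
    return f"data:{mime_type};base64,{encoded}"


# Placeholder bytes standing in for the contents of a real image file.
image_bytes = b"\x89PNG\r\n\x1a\n"

# Hypothetical run inputs; an evaluator prompt could map a template
# variable to the "image" field.
run_inputs = {
    "question": "Describe this image.",
    "image": to_base64_data_url(image_bytes, "image/png"),
}
print(run_inputs["image"][:22])
```

Base64 adds roughly a third to the payload size, so logging large files this way increases trace size; attachments may be a better fit for large media.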
