
# Use the graph API

This guide demonstrates the basics of LangGraph's Graph API. It walks through [state](#define-and-update-state), as well as composing common graph structures such as [sequences](#create-a-sequence-of-steps), [branches](#create-branches), and [loops](#create-and-control-loops). It also covers LangGraph's control features, including the [Send API](#map-reduce-and-the-send-api) for map-reduce workflows and the [Command API](#combine-control-flow-and-state-updates-with-command) for combining state updates with "hops" across nodes.

## Setup

Install `langgraph`:

```bash pip theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
pip install -U langgraph
```

```bash uv theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
uv add langgraph
```

**Set up LangSmith for better debugging**

Sign up for [LangSmith](https://smith.langchain.com?utm_source=docs\&utm_medium=cta\&utm_campaign=langsmith-signup\&utm_content=oss-langgraph-use-graph-api) to quickly spot issues and improve the performance of your LangGraph projects. LangSmith lets you use trace data to debug, test, and monitor your LLM apps built with LangGraph—read more about how to get started in the [docs](/langsmith/observability).

## Define and update state

Here we show how to define and update [state](/oss/python/langgraph/graph-api#state) in LangGraph. We will demonstrate:

1. How to use state to define a graph's [schema](/oss/python/langgraph/graph-api#schema)

2. How to use [reducers](/oss/python/langgraph/graph-api#reducers) to control how state updates are processed.

### Define state

[State](/oss/python/langgraph/graph-api#state) in LangGraph can be a `TypedDict`, a Pydantic model, or a dataclass. Below we use `TypedDict`. See [Use Pydantic models for graph state](#use-pydantic-models-for-graph-state) for details on using Pydantic.

By default, graphs will have the same input and output schema, and the state determines that schema. See [Define input and output schemas](#define-input-and-output-schemas) for how to define distinct input and output schemas.

Let's consider a simple example using [messages](/oss/python/langgraph/graph-api#messagesstate). This represents a versatile formulation of state for many LLM applications. See our [concepts page](/oss/python/langgraph/graph-api#working-with-messages-in-graph-state) for more detail.

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
from langchain.messages import AnyMessage
from typing_extensions import TypedDict

class State(TypedDict):
    messages: list[AnyMessage]
    extra_field: int
```

This state tracks a list of [message](https://python.langchain.com/docs/concepts/messages/) objects, as well as an extra integer field.

### Update state

Let's build an example graph with a single node. Our [node](/oss/python/langgraph/graph-api#nodes) is just a Python function that reads our graph's state and makes updates to it. The first argument to this function will always be the state:

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
from langchain.messages import AIMessage

def node(state: State):
    messages = state["messages"]
    new_message = AIMessage("Hello!")
    return {"messages": messages + [new_message], "extra_field": 10}
```

This node simply appends a message to our message list, and populates an extra field.

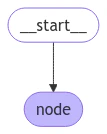

Nodes should return updates to the state directly, instead of mutating the state.
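This is ordinary Python aliasing at work. Appending to `state["messages"]` in place changes every other reference to that list, including any snapshot held elsewhere. The illustration below does not use LangGraph. `mutating_node` and `pure_node` are hypothetical names, and plain dicts stand in for graph state:

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
def mutating_node(state: dict) -> dict:
    # Anti-pattern: appends to the same list object the caller still holds
    state["messages"].append("Hello!")
    return {"messages": state["messages"]}


def pure_node(state: dict) -> dict:
    # Preferred: build a new list and return it as an update
    return {"messages": state["messages"] + ["Hello!"]}


snapshot = {"messages": ["Hi"]}

pure_update = pure_node(snapshot)
print(snapshot["messages"])  # ['Hi']  (unchanged)

mutating_update = mutating_node(snapshot)
print(snapshot["messages"])  # ['Hi', 'Hello!']  (changed as a side effect)
```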

Let's next define a simple graph containing this node. We use [`StateGraph`](/oss/python/langgraph/graph-api#stategraph) to define a graph that operates on this state. We then use [`add_node`](/oss/python/langgraph/graph-api#nodes) to populate our graph.

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
from langgraph.graph import StateGraph

builder = StateGraph(State)
builder.add_node(node)
builder.set_entry_point("node")
graph = builder.compile()
```

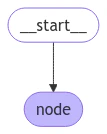

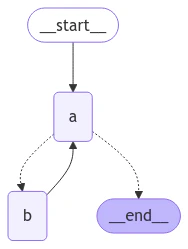

LangGraph provides built-in utilities for visualizing your graph. Let's inspect our graph. See [Visualize your graph](#visualize-your-graph) for details on visualization.

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
from IPython.display import Image, display

display(Image(graph.get_graph().draw_mermaid_png()))
```

In this case, our graph just executes a single node. Let's proceed with a simple invocation:

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
from langchain.messages import HumanMessage

result = graph.invoke({"messages": [HumanMessage("Hi")]})
result
```

```
{'messages': [HumanMessage(content='Hi'), AIMessage(content='Hello!')], 'extra_field': 10}
```

Note that:

* We kicked off invocation by updating a single key of the state.

* We receive the entire state in the invocation result.

For convenience, we frequently inspect the content of [message objects](https://python.langchain.com/docs/concepts/messages/) via pretty-print:

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
for message in result["messages"]:
    message.pretty_print()
```

```
================================ Human Message ================================

Hi
================================== Ai Message ==================================

Hello!
```

### Process state updates with reducers

Each key in the state can have its own independent [reducer](/oss/python/langgraph/graph-api#reducers) function, which controls how updates from nodes are applied. If no reducer is specified for a key, each update overwrites that key's current value.

For `TypedDict` state schemas, we can define reducers by annotating the corresponding field of the state with a reducer function.

In the earlier example, our node updated the `"messages"` key in the state by appending a message to it. Below, we add a reducer to this key, such that updates are automatically appended:

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
from typing_extensions import Annotated

def add(left, right):
    """Can also import `add` from the `operator` built-in."""
    return left + right

class State(TypedDict):
    messages: Annotated[list[AnyMessage], add]  # [!code highlight]
    extra_field: int
```

Now our node can be simplified:

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
def node(state: State):
    new_message = AIMessage("Hello!")
    return {"messages": [new_message], "extra_field": 10}  # [!code highlight]
```

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
from langgraph.graph import START

graph = StateGraph(State).add_node(node).add_edge(START, "node").compile()
result = graph.invoke({"messages": [HumanMessage("Hi")]})
for message in result["messages"]:
    message.pretty_print()
```

```
================================ Human Message ================================

Hi
================================== Ai Message ==================================

Hello!
```
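As a mental model only: the annotation attaches a function that merges the old and new values for that key, and unannotated keys are overwritten. The plain-Python sketch below reads the reducer from the `Annotated` metadata with `typing.get_type_hints`. `apply_update` and `DemoState` are hypothetical, and LangGraph's real channel machinery differs in its details:

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
import operator
from typing import Annotated, TypedDict, get_type_hints


class DemoState(TypedDict):
    messages: Annotated[list, operator.add]  # reducer: concatenate lists
    extra_field: int  # no reducer: the latest update wins


def apply_update(schema: type, current: dict, update: dict) -> dict:
    """Hypothetical helper: merge one node's partial update into the state."""
    hints = get_type_hints(schema, include_extras=True)
    merged = dict(current)
    for key, value in update.items():
        metadata = getattr(hints[key], "__metadata__", ())
        if metadata and key in merged:
            merged[key] = metadata[0](merged[key], value)  # call the reducer
        else:
            merged[key] = value  # first write, or a key without a reducer
    return merged


state = apply_update(DemoState, {}, {"messages": ["Hi"]})
state = apply_update(DemoState, state, {"messages": ["Hello!"], "extra_field": 10})
print(state)  # {'messages': ['Hi', 'Hello!'], 'extra_field': 10}
state = apply_update(DemoState, state, {"extra_field": 11})
print(state)  # {'messages': ['Hi', 'Hello!'], 'extra_field': 11}
```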

#### MessagesState

In practice, there are additional considerations for updating lists of messages:

* We may wish to update an existing message in the state.

* We may want to accept short-hands for [message formats](/oss/python/langgraph/graph-api#using-messages-in-your-graph), such as [OpenAI format](https://python.langchain.com/docs/concepts/messages/#openai-format).

LangGraph includes a built-in reducer [`add_messages`](https://reference.langchain.com/python/langgraph/graph/message/add_messages) that handles these considerations:

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
from langgraph.graph.message import add_messages

class State(TypedDict):
    messages: Annotated[list[AnyMessage], add_messages]  # [!code highlight]
    extra_field: int

def node(state: State):
    new_message = AIMessage("Hello!")
    return {"messages": [new_message], "extra_field": 10}

graph = StateGraph(State).add_node(node).set_entry_point("node").compile()
```

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
input_message = {"role": "user", "content": "Hi"}  # [!code highlight]
result = graph.invoke({"messages": [input_message]})
for message in result["messages"]:
    message.pretty_print()
```

```
================================ Human Message ================================

Hi
================================== Ai Message ==================================

Hello!
```

This is a versatile representation of state for applications involving [chat models](https://python.langchain.com/docs/concepts/chat_models/). LangGraph includes a prebuilt `MessagesState` for convenience, so that we can have:

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
from langgraph.graph import MessagesState

class State(MessagesState):
    extra_field: int
```
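The first consideration listed above, updating an existing message, relies on message IDs. An incoming message whose ID matches one already in the list replaces it, and any other message is appended. The plain-Python sketch below models only that rule and uses dicts in place of message objects. `merge_by_id` is hypothetical. Like `add_messages`, it gives an ID to any incoming message that lacks one, but the real reducer also converts OpenAI-style dicts into message objects and handles other cases:

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
import uuid


def merge_by_id(left: list, right: list) -> list:
    """Hypothetical sketch: replace messages whose id matches, append the rest."""
    # Give every incoming message an id so later updates can target it
    right = [m if m.get("id") else {**m, "id": str(uuid.uuid4())} for m in right]
    merged = list(left)
    index_by_id = {m.get("id"): i for i, m in enumerate(merged)}
    for message in right:
        if message["id"] in index_by_id:
            merged[index_by_id[message["id"]]] = message  # replace in place
        else:
            index_by_id[message["id"]] = len(merged)
            merged.append(message)  # new message goes to the end
    return merged


history = merge_by_id([], [{"id": "1", "role": "user", "content": "Hi"}])
history = merge_by_id(history, [{"id": "2", "role": "assistant", "content": "Helo!"}])
# A later update that reuses id "2" corrects the earlier message instead of appending
history = merge_by_id(history, [{"id": "2", "role": "assistant", "content": "Hello!"}])
print([m["content"] for m in history])  # ['Hi', 'Hello!']
```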

### Bypass reducers with `Overwrite`

In some cases, you may want to bypass a reducer and directly overwrite a state value. LangGraph provides the [`Overwrite`](https://reference.langchain.com/python/langgraph/types/) type for this purpose. When a node returns a value wrapped with `Overwrite`, the reducer is bypassed and the channel is set directly to that value.

This is useful when you want to reset or replace accumulated state rather than merge it with existing values.

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
from langgraph.graph import StateGraph, START, END
from langgraph.types import Overwrite
from typing_extensions import Annotated, TypedDict
import operator

class State(TypedDict):
    messages: Annotated[list, operator.add]

def add_message(state: State):
    return {"messages": ["first message"]}

def replace_messages(state: State):
    # Bypass the reducer and replace the entire messages list
    return {"messages": Overwrite(["replacement message"])}

builder = StateGraph(State)
builder.add_node("add_message", add_message)
builder.add_node("replace_messages", replace_messages)
builder.add_edge(START, "add_message")
builder.add_edge("add_message", "replace_messages")
builder.add_edge("replace_messages", END)
graph = builder.compile()

result = graph.invoke({"messages": ["initial"]})
print(result["messages"])
```

```
['replacement message']
```

You can also use JSON format with the special key `"__overwrite__"`:

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
def replace_messages(state: State):
    return {"messages": {"__overwrite__": ["replacement message"]}}
```

When nodes execute in parallel, only one node can use `Overwrite` on the same state key in a given super-step. If multiple nodes attempt to overwrite the same key in the same super-step, an `InvalidUpdateError` will be raised.
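Both rules can be modeled in plain Python. In the sketch below, `OverwriteValue`, `ConflictingOverwrite`, and `apply_superstep` are hypothetical stand-ins for `Overwrite` and `InvalidUpdateError`, not LangGraph code. The writes a key receives in one super-step are folded through the reducer, a single wrapped value skips the reducer, and two wrapped values are rejected. This sketch does not model how LangGraph treats an `Overwrite` mixed with ordinary writes in the same step, so it refuses that case:

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
import operator
from dataclasses import dataclass
from typing import Any, Callable


@dataclass
class OverwriteValue:
    """Hypothetical marker: store `value` as-is instead of calling the reducer."""

    value: Any


class ConflictingOverwrite(Exception):
    """Hypothetical stand-in for LangGraph's InvalidUpdateError."""


def apply_superstep(current: Any, writes: list, reducer: Callable = operator.add) -> Any:
    """Apply every write made to one key by the nodes of a single super-step."""
    overwrites = [w for w in writes if isinstance(w, OverwriteValue)]
    if len(overwrites) > 1:
        raise ConflictingOverwrite("more than one Overwrite for the same key")
    if overwrites:
        if len(writes) > 1:
            raise NotImplementedError("mixing Overwrite with normal writes is out of scope")
        return overwrites[0].value  # reducer bypassed
    value = current
    for write in writes:
        value = reducer(value, write)  # normal path: fold writes through the reducer
    return value


messages = ["initial"]
messages = apply_superstep(messages, [["first message"]])
messages = apply_superstep(messages, [OverwriteValue(["replacement message"])])
print(messages)  # ['replacement message']

try:
    apply_superstep(messages, [OverwriteValue(["a"]), OverwriteValue(["b"])])
except ConflictingOverwrite as exc:
    print(f"rejected: {exc}")
```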

### Define input and output schemas

By default, `StateGraph` operates with a single schema, and all nodes are expected to communicate using that schema. However, it's also possible to define distinct input and output schemas for a graph.

When distinct schemas are specified, an internal schema will still be used for communication between nodes. The input schema ensures that the provided input matches the expected structure, while the output schema filters the internal data to return only the relevant information according to the defined output schema.

Below, we'll see how to define distinct input and output schemas.

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
from langgraph.graph import StateGraph, START, END
from typing_extensions import TypedDict

# Define the schema for the input
class InputState(TypedDict):
    question: str

# Define the schema for the output
class OutputState(TypedDict):
    answer: str

# Define the overall schema, combining both input and output
class OverallState(InputState, OutputState):
    pass

# Define the node that processes the input and generates an answer
def answer_node(state: InputState):
    # Example answer and an extra key
    return {"answer": "bye", "question": state["question"]}

# Build the graph with input and output schemas specified
builder = StateGraph(OverallState, input_schema=InputState, output_schema=OutputState)
builder.add_node(answer_node)  # Add the answer node
builder.add_edge(START, "answer_node")  # Define the starting edge
builder.add_edge("answer_node", END)  # Define the ending edge
graph = builder.compile()  # Compile the graph

# Invoke the graph with an input and print the result
print(graph.invoke({"question": "hi"}))
```

```
{'answer': 'bye'}
```

Notice that the output of `invoke` includes only the keys defined in the output schema.
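Conceptually, the output schema acts as a key filter over the internal state. The plain-Python sketch below shows that filtering with `typing.get_type_hints`. `project` is a hypothetical helper, not a LangGraph API:

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
from typing import TypedDict, get_type_hints


class InputState(TypedDict):
    question: str


class OutputState(TypedDict):
    answer: str


class OverallState(InputState, OutputState):
    pass


def project(schema: type, state: dict) -> dict:
    """Hypothetical helper: keep only the keys declared on `schema`."""
    allowed = get_type_hints(schema).keys()
    return {key: value for key, value in state.items() if key in allowed}


internal = {"question": "hi", "answer": "bye"}  # what the nodes have written
print(project(OutputState, internal))  # {'answer': 'bye'}
print(sorted(get_type_hints(OverallState)))  # ['answer', 'question']
```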

### Pass private state between nodes

In some cases, you may want nodes to exchange information that is crucial for intermediate logic but doesn't need to be part of the main schema of the graph. This private data is not relevant to the overall input/output of the graph and should only be shared between certain nodes.

Below, we'll create an example sequential graph consisting of three nodes (node\_1, node\_2 and node\_3), where private data is passed between the first two steps (node\_1 and node\_2), while the third step (node\_3) only has access to the public overall state.

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
from langgraph.graph import StateGraph, START, END
from typing_extensions import TypedDict

# The overall state of the graph (this is the public state shared across nodes)
class OverallState(TypedDict):
    a: str

# Output from node_1 contains private data that is not part of the overall state
class Node1Output(TypedDict):
    private_data: str

# The private data is only shared between node_1 and node_2
def node_1(state: OverallState) -> Node1Output:
    output = {"private_data": "set by node_1"}
    print(f"Entered node `node_1`:\n\tInput: {state}.\n\tReturned: {output}")
    return output

# Node 2 input only requests the private data available after node_1
class Node2Input(TypedDict):
    private_data: str

def node_2(state: Node2Input) -> OverallState:
    output = {"a": "set by node_2"}
    print(f"Entered node `node_2`:\n\tInput: {state}.\n\tReturned: {output}")
    return output

# Node 3 only has access to the overall state (no access to private data from node_1)
def node_3(state: OverallState) -> OverallState:
    output = {"a": "set by node_3"}
    print(f"Entered node `node_3`:\n\tInput: {state}.\n\tReturned: {output}")
    return output

# Connect nodes in a sequence
# node_2 accepts private data from node_1, whereas
# node_3 does not see the private data.
builder = StateGraph(OverallState).add_sequence([node_1, node_2, node_3])
builder.add_edge(START, "node_1")
graph = builder.compile()

# Invoke the graph with the initial state
response = graph.invoke(
    {
        "a": "set at start",
    }
)
print()
print(f"Output of graph invocation: {response}")
```

```
Entered node `node_1`:
	Input: {'a': 'set at start'}.
	Returned: {'private_data': 'set by node_1'}
Entered node `node_2`:
	Input: {'private_data': 'set by node_1'}.
	Returned: {'a': 'set by node_2'}
Entered node `node_3`:
	Input: {'a': 'set by node_2'}.
	Returned: {'a': 'set by node_3'}

Output of graph invocation: {'a': 'set by node_3'}
```
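A toy model reproduces the trace above. The graph keeps one shared set of channels (the union of every key any node reads or writes), hands each node only the keys its input annotation declares, and filters the final result through the graph's schema. `run_sequence` is hypothetical and leaves out reducers, checkpointing, and everything else LangGraph does:

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
import inspect
from typing import TypedDict, get_type_hints


class OverallState(TypedDict):
    a: str


class Node2Input(TypedDict):
    private_data: str


def node_1(state: OverallState) -> dict:
    return {"private_data": "set by node_1"}


def node_2(state: Node2Input) -> dict:
    return {"a": "set by node_2"}


def node_3(state: OverallState) -> dict:
    return {"a": "set by node_3"}


def run_sequence(nodes: list, channels: dict, output_schema: type) -> tuple:
    """Hypothetical toy runner: each node sees only the keys its input schema declares."""
    seen = {}  # what each node received, for inspection
    for node in nodes:
        first_param = next(iter(inspect.signature(node).parameters.values()))
        wanted = get_type_hints(first_param.annotation).keys()
        node_input = {k: v for k, v in channels.items() if k in wanted}
        seen[node.__name__] = node_input
        channels = {**channels, **node(node_input)}  # no reducers: overwrite
    output_keys = get_type_hints(output_schema).keys()
    return {k: v for k, v in channels.items() if k in output_keys}, seen


result, seen = run_sequence([node_1, node_2, node_3], {"a": "set at start"}, OverallState)
print(seen["node_3"])  # {'a': 'set by node_2'}
print(result)  # {'a': 'set by node_3'}
```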

### Use pydantic models for graph state

A [StateGraph](https://langchain-ai.github.io/langgraph/reference/graphs.md#langgraph.graph.StateGraph) accepts a `state_schema` argument on initialization that specifies the "shape" of the state that the nodes in the graph can access and update.

In our examples, we typically use a Python-native `TypedDict` or [`dataclass`](https://docs.python.org/3/library/dataclasses.html) for `state_schema`, but `state_schema` can be any [type](https://docs.python.org/3/library/stdtypes.html#type-objects).

Here, we'll see how a [Pydantic BaseModel](https://docs.pydantic.dev/latest/api/base_model/) can be used for `state_schema` to add run-time validation on **inputs**.

**Known Limitations**

* Currently, the output of the graph will **NOT** be an instance of a Pydantic model.

* Run-time validation only occurs on inputs to the first node in the graph, not on subsequent nodes or outputs.

* The validation error trace from Pydantic does not show which node the error arises in.

* Pydantic's recursive validation can be slow. For performance-sensitive applications, you may want to consider using a `dataclass` instead.

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
from langgraph.graph import StateGraph, START, END
from pydantic import BaseModel

# The overall state of the graph (this is the public state shared across nodes)
class OverallState(BaseModel):
    a: str

def node(state: OverallState):
    return {"a": "goodbye"}

# Build the state graph
builder = StateGraph(OverallState)
builder.add_node(node)  # `node` is the first (and only) node
builder.add_edge(START, "node")  # Start the graph with `node`
builder.add_edge("node", END)  # End the graph after `node`
graph = builder.compile()

# Test the graph with a valid input
graph.invoke({"a": "hello"})
```

Invoke the graph with an **invalid** input:

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
try:
    graph.invoke({"a": 123})  # Should be a string
except Exception as e:
    print("An exception was raised because `a` is an integer rather than a string.")
    print(e)
```

```
An exception was raised because `a` is an integer rather than a string.
1 validation error for OverallState
a
  Input should be a valid string [type=string_type, input_value=123, input_type=int]
    For further information visit https://errors.pydantic.dev/2.9/v/string_type
```

See below for additional features of Pydantic model state:

When using Pydantic models as state schemas, it's important to understand how serialization works, especially when:

* Passing Pydantic objects as inputs

* Receiving outputs from the graph

* Working with nested Pydantic models

Let's see these behaviors in action.

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
from langgraph.graph import StateGraph, START, END
from pydantic import BaseModel

class NestedModel(BaseModel):
    value: str

class ComplexState(BaseModel):
    text: str
    count: int
    nested: NestedModel

def process_node(state: ComplexState):
    # Node receives a validated Pydantic object
    print(f"Input state type: {type(state)}")
    print(f"Nested type: {type(state.nested)}")
    # Return a dictionary update
    return {"text": state.text + " processed", "count": state.count + 1}

# Build the graph
builder = StateGraph(ComplexState)
builder.add_node("process", process_node)
builder.add_edge(START, "process")
builder.add_edge("process", END)
graph = builder.compile()

# Create a Pydantic instance for input
input_state = ComplexState(text="hello", count=0, nested=NestedModel(value="test"))
print(f"Input object type: {type(input_state)}")

# Invoke graph with a Pydantic instance
result = graph.invoke(input_state)
print(f"Output type: {type(result)}")
print(f"Output content: {result}")

# Convert back to Pydantic model if needed
output_model = ComplexState(**result)
print(f"Converted back to Pydantic: {type(output_model)}")
```

Pydantic performs runtime type coercion for certain data types. This can be helpful, but it can also lead to unexpected behavior if you're not aware of it.

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
from langgraph.graph import StateGraph, START, END
from pydantic import BaseModel

class CoercionExample(BaseModel):
    # Pydantic will coerce string numbers to integers
    number: int
    # Pydantic will parse string booleans to bool
    flag: bool

def inspect_node(state: CoercionExample):
    print(f"number: {state.number} (type: {type(state.number)})")
    print(f"flag: {state.flag} (type: {type(state.flag)})")
    return {}

builder = StateGraph(CoercionExample)
builder.add_node("inspect", inspect_node)
builder.add_edge(START, "inspect")
builder.add_edge("inspect", END)
graph = builder.compile()

# Demonstrate coercion with string inputs that will be converted
result = graph.invoke({"number": "42", "flag": "true"})

# This would fail with a validation error
try:
    graph.invoke({"number": "not-a-number", "flag": "true"})
except Exception as e:
    print(f"\nExpected validation error: {e}")
```

When working with LangChain message types in your state schema, there are important considerations for serialization. You should use `AnyMessage` (rather than `BaseMessage`) for proper serialization/deserialization when using message objects over the wire.

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
from langgraph.graph import StateGraph, START, END
from pydantic import BaseModel
from langchain.messages import HumanMessage, AIMessage, AnyMessage
from typing import List

class ChatState(BaseModel):
    messages: List[AnyMessage]
    context: str

def add_message(state: ChatState):
    return {"messages": state.messages + [AIMessage(content="Hello there!")]}

builder = StateGraph(ChatState)
builder.add_node("add_message", add_message)
builder.add_edge(START, "add_message")
builder.add_edge("add_message", END)
graph = builder.compile()

# Create input with a message
initial_state = ChatState(
    messages=[HumanMessage(content="Hi")], context="Customer support chat"
)
result = graph.invoke(initial_state)
print(f"Output: {result}")

# Convert back to Pydantic model to see message types
output_model = ChatState(**result)
for i, msg in enumerate(output_model.messages):
    print(f"Message {i}: {type(msg).__name__} - {msg.content}")
```
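The known limitations above suggest a `dataclass` when Pydantic's validation cost matters. A plain dataclass performs no type validation at all. If you still want a cheap, shallow check, one option (our assumption, not a LangGraph requirement) is a `__post_init__` hook on the state class. This sketch only covers objects you construct yourself. It does not address whether or when LangGraph instantiates the class:

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
from dataclasses import dataclass, fields


@dataclass
class OverallState:
    a: str

    def __post_init__(self) -> None:
        # Shallow isinstance check per field: no coercion and no recursion.
        # Works only for plain classes such as str or int, not list[str].
        for field in fields(self):
            value = getattr(self, field.name)
            if not isinstance(value, field.type):
                raise TypeError(
                    f"{field.name} should be {field.type.__name__}, "
                    f"got {type(value).__name__}"
                )


valid = OverallState(a="hello")
try:
    OverallState(a=123)
except TypeError as exc:
    print(exc)  # a should be str, got int
```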

## Add runtime configuration

Sometimes you want to be able to configure your graph when calling it. For example, you might want to be able to specify what LLM or system prompt to use at runtime, *without polluting the graph state with these parameters*.

To add runtime configuration:

1. Specify a schema for your configuration

2. Add the configuration to the function signature for nodes or conditional edges

3. Pass the configuration into the graph.

See below for a simple example:

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
from langgraph.graph import END, StateGraph, START
from langgraph.runtime import Runtime
from typing_extensions import TypedDict

# 1. Specify config schema
class ContextSchema(TypedDict):
    my_runtime_value: str

# 2. Define a graph that accesses the config in a node
class State(TypedDict):
    my_state_value: int

def node(state: State, runtime: Runtime[ContextSchema]):  # [!code highlight]
    if runtime.context["my_runtime_value"] == "a":  # [!code highlight]
        return {"my_state_value": 1}
    elif runtime.context["my_runtime_value"] == "b":  # [!code highlight]
        return {"my_state_value": 2}
    else:
        raise ValueError("Unknown values.")

builder = StateGraph(State, context_schema=ContextSchema)  # [!code highlight]
builder.add_node(node)
builder.add_edge(START, "node")
builder.add_edge("node", END)
graph = builder.compile()

# 3. Pass in configuration at runtime:
print(graph.invoke({}, context={"my_runtime_value": "a"}))  # [!code highlight]
print(graph.invoke({}, context={"my_runtime_value": "b"}))  # [!code highlight]
```

```
{'my_state_value': 1}
{'my_state_value': 2}
```
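The point of `context` is that it travels alongside the state rather than inside it. The plain-Python sketch below mimics that separation. `MiniRuntime`, `DemoContext`, and `invoke` are hypothetical stand-ins for `Runtime`, your context schema, and `graph.invoke(..., context=...)`, and only the returned update is merged into state:

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
from dataclasses import dataclass
from typing import Generic, TypeVar

ContextT = TypeVar("ContextT")


@dataclass(frozen=True)
class MiniRuntime(Generic[ContextT]):
    context: ContextT  # read-only, per-invocation configuration


@dataclass(frozen=True)
class DemoContext:
    model_provider: str = "anthropic"


def node(state: dict, runtime: MiniRuntime[DemoContext]) -> dict:
    # The node can read the context, but it only returns a state update
    return {"reply": f"answered by {runtime.context.model_provider}"}


def invoke(node_fn, state: dict, context) -> dict:
    # Merge the node's update into state; the context itself is never stored
    return {**state, **node_fn(state, MiniRuntime(context))}


print(invoke(node, {"question": "hi"}, DemoContext(model_provider="openai")))
# {'question': 'hi', 'reply': 'answered by openai'}
```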

Below we demonstrate a practical example in which we configure which LLM to use at runtime. We will use both OpenAI and Anthropic models.

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
from dataclasses import dataclass

from langchain.chat_models import init_chat_model
from langgraph.graph import MessagesState, END, StateGraph, START
from langgraph.runtime import Runtime

@dataclass
class ContextSchema:
    model_provider: str = "anthropic"

MODELS = {
    "anthropic": init_chat_model("claude-haiku-4-5-20251001"),
    "openai": init_chat_model("gpt-5.4-mini"),
}

def call_model(state: MessagesState, runtime: Runtime[ContextSchema]):
    model = MODELS[runtime.context.model_provider]
    response = model.invoke(state["messages"])
    return {"messages": [response]}

builder = StateGraph(MessagesState, context_schema=ContextSchema)
builder.add_node("model", call_model)
builder.add_edge(START, "model")
builder.add_edge("model", END)
graph = builder.compile()

# Usage
input_message = {"role": "user", "content": "hi"}
# With no configuration, uses default (Anthropic)
response_1 = graph.invoke({"messages": [input_message]}, context=ContextSchema())["messages"][-1]
# Or, can set OpenAI
response_2 = graph.invoke({"messages": [input_message]}, context={"model_provider": "openai"})["messages"][-1]
print(response_1.response_metadata["model_name"])
print(response_2.response_metadata["model_name"])
```

```
claude-haiku-4-5-20251001
gpt-5.4-mini
```

Below we demonstrate a practical example in which we configure two parameters: the LLM and system message to use at runtime.

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
from dataclasses import dataclass

from langchain.chat_models import init_chat_model
from langchain.messages import SystemMessage
from langgraph.graph import END, MessagesState, StateGraph, START
from langgraph.runtime import Runtime

@dataclass
class ContextSchema:
    model_provider: str = "anthropic"
    system_message: str | None = None

MODELS = {
    "anthropic": init_chat_model("claude-haiku-4-5-20251001"),
    "openai": init_chat_model("gpt-5.4-mini"),
}

def call_model(state: MessagesState, runtime: Runtime[ContextSchema]):
    model = MODELS[runtime.context.model_provider]
    messages = state["messages"]
    if (system_message := runtime.context.system_message):
        messages = [SystemMessage(system_message)] + messages
    response = model.invoke(messages)
    return {"messages": [response]}

builder = StateGraph(MessagesState, context_schema=ContextSchema)
builder.add_node("model", call_model)
builder.add_edge(START, "model")
builder.add_edge("model", END)
graph = builder.compile()

# Usage
input_message = {"role": "user", "content": "hi"}
response = graph.invoke({"messages": [input_message]}, context={"model_provider": "openai", "system_message": "Respond in Italian."})
for message in response["messages"]:
    message.pretty_print()
```

```
================================ Human Message ================================

hi
================================== Ai Message ==================================

Ciao! Come posso aiutarti oggi?
```

## Add retry policies

You may want a custom retry policy for a node that calls an external dependency, such as an API, a database, or an LLM. LangGraph lets you attach a retry policy to each node.

To configure a retry policy, pass the `retry_policy` parameter to [`add_node`](https://reference.langchain.com/python/langgraph/graph/state/StateGraph/add_node). The `retry_policy` parameter takes a `RetryPolicy` named tuple. Below we instantiate a `RetryPolicy` with the default parameters and associate it with a node:

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
from langgraph.types import RetryPolicy

builder.add_node(
    "node_name",
    node_function,
    retry_policy=RetryPolicy(),
)
```
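Besides `retry_on`, `RetryPolicy` controls the wait between attempts through fields such as `initial_interval`, `backoff_factor`, `max_interval`, `max_attempts`, and `jitter`. The plain-Python sketch below computes an exponential backoff schedule using those names. The default values and the exact formula are assumptions for illustration, and jitter is off here so the output is deterministic. Check the `RetryPolicy` reference before relying on them:

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
import random


def backoff_delays(
    max_attempts: int = 3,
    initial_interval: float = 0.5,
    backoff_factor: float = 2.0,
    max_interval: float = 128.0,
    jitter: bool = False,
) -> list:
    """Seconds to wait before each retry; the first attempt runs without delay."""
    delays = []
    for retry in range(max_attempts - 1):
        delay = min(max_interval, initial_interval * backoff_factor**retry)
        if jitter:
            delay += random.uniform(0, 1)  # spread out retries from many workers
        delays.append(delay)
    return delays


print(backoff_delays())  # [0.5, 1.0]
print(backoff_delays(max_attempts=5))  # [0.5, 1.0, 2.0, 4.0]
print(backoff_delays(max_attempts=8, max_interval=4.0))  # [0.5, 1.0, 2.0, 4.0, 4.0, 4.0, 4.0]
```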

By default, the `retry_on` parameter uses the `default_retry_on` function, which retries on any exception except for the following:

* `ValueError`

* `TypeError`

* `ArithmeticError`

* `ImportError`

* `LookupError`

* `NameError`

* `SyntaxError`

* `RuntimeError`

* `ReferenceError`

* `StopIteration`

* `StopAsyncIteration`

* `OSError`

In addition, for exceptions from popular HTTP request libraries such as `requests` and `httpx`, it retries only on 5xx status codes.
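These rules can be written as a predicate. The sketch below is a simplified model built only from the list above. `HTTPStatusError` and `TransientError` are hypothetical exception classes, and LangGraph's real `default_retry_on` may special-case exceptions the list does not mention:

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
NON_RETRYABLE = (
    ValueError, TypeError, ArithmeticError, ImportError, LookupError, NameError,
    SyntaxError, RuntimeError, ReferenceError, StopIteration, StopAsyncIteration,
    OSError,
)


class HTTPStatusError(Exception):
    """Hypothetical HTTP error that carries the response status code."""

    def __init__(self, status_code: int) -> None:
        super().__init__(f"HTTP {status_code}")
        self.status_code = status_code


class TransientError(Exception):
    """Hypothetical application error that is worth retrying."""


def should_retry(exc: Exception) -> bool:
    # Check HTTP errors first: some HTTP client exceptions subclass OSError
    if isinstance(exc, HTTPStatusError):
        return 500 <= exc.status_code < 600
    # Errors that usually signal a bug or bad input are not retried
    if isinstance(exc, NON_RETRYABLE):
        return False
    return True


for exc in (HTTPStatusError(503), HTTPStatusError(404), KeyError("x"), TransientError()):
    print(type(exc).__name__, should_retry(exc))
```

Because `retry_on` also accepts a callable that takes the exception and returns a bool, you could pass a predicate like this as `RetryPolicy(retry_on=should_retry)`.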

Consider an example in which we are reading from a SQL database. Below we pass two different retry policies to nodes:

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
import sqlite3

from langchain.chat_models import init_chat_model
from langgraph.graph import END, MessagesState, StateGraph, START
from langgraph.types import RetryPolicy
from langchain_community.utilities import SQLDatabase
from langchain.messages import AIMessage

db = SQLDatabase.from_uri("sqlite:///:memory:")
model = init_chat_model("claude-haiku-4-5-20251001")

def query_database(state: MessagesState):
    query_result = db.run("SELECT * FROM Artist LIMIT 10;")
    return {"messages": [AIMessage(content=query_result)]}

def call_model(state: MessagesState):
    response = model.invoke(state["messages"])
    return {"messages": [response]}

# Define a new graph
builder = StateGraph(MessagesState)
builder.add_node(
    "query_database",
    query_database,
    retry_policy=RetryPolicy(retry_on=sqlite3.OperationalError),
)
builder.add_node("model", call_model, retry_policy=RetryPolicy(max_attempts=5))
builder.add_edge(START, "model")
builder.add_edge("model", "query_database")
builder.add_edge("query_database", END)
graph = builder.compile()
```

## Set node timeouts

Use the `timeout` parameter with [`add_node`](https://reference.langchain.com/python/langgraph/graph/state/StateGraph/add_node) to limit how long a single async node invocation can run. Provide the timeout in seconds or as a `datetime.timedelta`.

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
import asyncio

from typing_extensions import TypedDict
from langgraph.errors import NodeTimeoutError
from langgraph.graph import END, START, StateGraph

class State(TypedDict):
    value: str

async def call_model(state: State) -> State:
    await asyncio.sleep(2)  # Simulate a slow call that exceeds the timeout
    return {"value": "done"}

builder = StateGraph(State)
builder.add_node("model", call_model, timeout=1.0)  # Cap each attempt at 1 second
builder.add_edge(START, "model")
builder.add_edge("model", END)
graph = builder.compile()

# Top-level `await` works in a notebook; in a script, run this inside `asyncio.run(...)`
try:
    await graph.ainvoke({"value": "start"})
except NodeTimeoutError:
    print("Node timed out")
```

Node timeouts are supported only for async nodes. If you set `timeout` on a sync node, LangGraph raises an error when the graph is compiled because sync Python execution cannot be safely canceled in-process.

When a node exceeds its timeout, LangGraph raises `NodeTimeoutError`, which subclasses Python's built-in `TimeoutError`. If the node has a `retry_policy` that retries `TimeoutError` or `NodeTimeoutError`, the timed-out attempt is retried. The timeout applies to each attempt independently, so the timer resets for every retry.

Timed-out attempts do not commit their buffered writes. This prevents state updates or child-task scheduling from leaking out after the timeout boundary.
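Both rules, a fresh timer per attempt and no commit from a timed-out attempt, can be modeled with plain `asyncio`. In the sketch below, `run_with_retries` and `flaky_node` are hypothetical, and this is not LangGraph's executor. Each attempt stages its writes in a local buffer, and the buffer is committed only when the attempt finishes within the limit:

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
import asyncio


async def flaky_node(attempt: int, writes: list) -> None:
    writes.append(f"write from attempt {attempt}")
    if attempt == 1:
        await asyncio.sleep(0.2)  # the first attempt is too slow


async def run_with_retries(node, timeout: float, max_attempts: int) -> list:
    """Hypothetical executor: per-attempt timeout, writes committed only on success."""
    committed: list = []
    for attempt in range(1, max_attempts + 1):
        buffer: list = []  # writes are staged per attempt
        try:
            # A fresh timer for every attempt
            await asyncio.wait_for(node(attempt, buffer), timeout=timeout)
        except asyncio.TimeoutError:
            continue  # discard this attempt's buffered writes, then retry
        committed.extend(buffer)  # commit only after the attempt succeeded
        return committed
    raise TimeoutError(f"all {max_attempts} attempts timed out")


print(asyncio.run(run_with_retries(flaky_node, timeout=0.05, max_attempts=3)))
# ['write from attempt 2']
```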

## Configure node timeouts

The `timeout=` parameter on [`add_node`](https://reference.langchain.com/python/langgraph/graph/state/StateGraph/add_node) caps how long a single async node attempt may run. Pass a number (seconds), a `timedelta`, or a [`TimeoutPolicy`](https://reference.langchain.com/python/langgraph/types/TimeoutPolicy) for finer control over run and idle timeouts. When the limit is exceeded, LangGraph raises [`NodeTimeoutError`](https://reference.langchain.com/python/langgraph/errors/NodeTimeoutError) and lets the retry policy decide whether to retry.

Per-node timeouts require `langgraph>=1.2`.

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}

from langgraph.types import TimeoutPolicy

builder.add_node(

"call_model",

call_model,

timeout=TimeoutPolicy(run_timeout=120, idle_timeout=30),

)

```

See [Fault tolerance](/oss/python/langgraph/fault-tolerance#timeouts) for the full timeout lifecycle, idle-timeout refresh sources, and `runtime.heartbeat()`.

## Handle node errors

The `error_handler=` parameter on [`add_node`](https://reference.langchain.com/python/langgraph/graph/state/StateGraph/add_node) registers a function that runs after a node fails and all retries are exhausted. The handler receives the current state and a typed [`NodeError`](https://reference.langchain.com/python/langgraph/errors/NodeError) with failure context, and can route to a recovery branch via [`Command`](https://reference.langchain.com/python/langgraph/types/Command):

Node-level error handlers require `langgraph>=1.2`.

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}

from langgraph.errors import NodeError
from langgraph.types import Command, RetryPolicy


def payment_error_handler(state: State, error: NodeError) -> Command:
    return Command(
        update={"status": f"compensated: {error.error}"},
        goto="finalize",
    )


builder.add_node(
    "charge_payment",
    charge_payment,
    retry_policy=RetryPolicy(max_attempts=3, retry_on=ConnectionError),
    error_handler=payment_error_handler,
)

```

See [Fault tolerance](/oss/python/langgraph/fault-tolerance#error-handling) for compensation patterns and `Command` routing.
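The order of operations described above (retry first, then call the handler once retries are exhausted) can be modeled in plain Python. This is a hypothetical sketch, not LangGraph's implementation: `run_node` and `FailureInfo` are illustrative stand-ins for LangGraph's internals and `NodeError`, the model returns a plain update dictionary instead of a `Command` (so routing is left out), and it assumes an exception outside `retry_on` goes straight to the handler.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, Optional, Tuple, Type, Union

State = Dict[str, Any]


@dataclass
class FailureInfo:
    """Hypothetical stand-in for the failure context a handler receives."""
    node: str
    error: Exception
    attempts: int


def run_node(
    name: str,
    fn: Callable[[State], State],
    state: State,
    *,
    max_attempts: int,
    retry_on: Union[Type[Exception], Tuple[Type[Exception], ...]],
    error_handler: Callable[[State, FailureInfo], State],
) -> State:
    """Conceptual model: retry first, call the handler only once retries run out."""
    last_error: Optional[Exception] = None
    attempt = 0
    for attempt in range(1, max_attempts + 1):
        try:
            return fn(state)
        except retry_on as exc:  # retryable: loop again if attempts remain
            last_error = exc
        except Exception as exc:  # assumption: non-retryable errors skip retries
            last_error = exc
            break
    if last_error is None:
        raise ValueError("max_attempts must be at least 1")
    return error_handler(state, FailureInfo(name, last_error, attempt))


calls = []


def charge_payment(state: State) -> State:
    calls.append(state)  # hypothetical node that always fails to connect
    raise ConnectionError("gateway unreachable")


def payment_error_handler(state: State, failure: FailureInfo) -> State:
    return {"status": f"compensated: {failure.error}", "attempts": failure.attempts}


update = run_node(
    "charge_payment",
    charge_payment,
    {},
    max_attempts=3,
    retry_on=ConnectionError,
    error_handler=payment_error_handler,
)
print(update)  # {'status': 'compensated: gateway unreachable', 'attempts': 3}
```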

### Access execution info inside a node

You can access execution identity and retry information via `runtime.execution_info`. This surfaces thread, run, and checkpoint identifiers as well as retry state, without needing to read from `config` directly.

| Attribute | Type | Description |
| ------------------------- | --------------- | ------------------------------------------------------------------------------------------------ |
| `thread_id` | `str \| None` | Thread ID for the current execution. `None` without a checkpointer. |
| `run_id` | `str \| None` | Run ID for the current execution. `None` when not provided in config. |
| `checkpoint_id` | `str` | Checkpoint ID for the current execution. |
| `checkpoint_ns` | `str` | Checkpoint namespace for the current execution. |
| `task_id` | `str` | Task ID for the current execution. |
| `node_attempt` | `int` | Current execution attempt number (1-indexed). `1` on the first try, `2` on the first retry, etc. |
| `node_first_attempt_time` | `float \| None` | Unix timestamp (seconds) of when the first attempt started. Stays the same across retries. |

#### Access thread and run IDs

Use `execution_info` to access the thread ID, run ID, and other identity fields inside a node:

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}

from langgraph.graph import StateGraph, START, END
from langgraph.runtime import Runtime
from typing_extensions import TypedDict


class State(TypedDict):
    result: str


def my_node(state: State, runtime: Runtime):
    info = runtime.execution_info
    print(f"Thread: {info.thread_id}, Run: {info.run_id}")  # [!code highlight]
    return {"result": "done"}


builder = StateGraph(State)
builder.add_node("my_node", my_node)
builder.add_edge(START, "my_node")
builder.add_edge("my_node", END)
graph = builder.compile()

```

#### Adjust behavior based on retry state

When a node has a retry policy, use `execution_info` to inspect the current attempt number and switch to a fallback after the first attempt fails:

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}

from langgraph.graph import StateGraph, START, END
from langgraph.runtime import Runtime
from langgraph.types import RetryPolicy
from typing_extensions import TypedDict


class State(TypedDict):
    result: str


def my_node(state: State, runtime: Runtime):
    info = runtime.execution_info
    if info.node_attempt > 1:  # [!code highlight]
        # use a fallback on retries
        return {"result": call_fallback_api()}
    return {"result": call_primary_api()}


builder = StateGraph(State)
builder.add_node("my_node", my_node, retry_policy=RetryPolicy(max_attempts=3))
builder.add_edge(START, "my_node")
builder.add_edge("my_node", END)
graph = builder.compile()

```

`execution_info` is available on the `Runtime` object even without a retry policy — `node_attempt` defaults to `1` and `node_first_attempt_time` is set to the time the node starts executing.

### Access server info inside a node

When your graph runs on LangGraph Server, you can access server-specific metadata via `runtime.server_info`. This surfaces the assistant ID, graph ID, and authenticated user without needing to read from config metadata or configurable keys directly.

| Attribute | Type | Description |
| -------------- | ------------------ | ------------------------------------------------------------------------------- |
| `assistant_id` | `str` | The assistant ID for the current deployment. |
| `graph_id` | `str` | The graph ID for the current deployment. |
| `user` | `BaseUser \| None` | The authenticated user, if [custom auth](/langsmith/custom-auth) is configured. |

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}

from langgraph.graph import StateGraph, START, END
from langgraph.runtime import Runtime
from typing_extensions import TypedDict


class State(TypedDict):
    result: str


def my_node(state: State, runtime: Runtime):
    server = runtime.server_info
    if server is not None:
        print(f"Assistant: {server.assistant_id}, Graph: {server.graph_id}")  # [!code highlight]
        if server.user is not None:
            print(f"User: {server.user.identity}")
    return {"result": "done"}


builder = StateGraph(State)
builder.add_node("my_node", my_node)
builder.add_edge(START, "my_node")
builder.add_edge("my_node", END)
graph = builder.compile()

```

`server_info` is `None` when the graph is not running on LangGraph Server (e.g., during local development or testing).

Requires `deepagents>=0.5.0` (or `langgraph>=1.1.5`) for `runtime.execution_info` and `runtime.server_info`.

### Access drain state inside a node

When a [graceful shutdown](/oss/python/langgraph/durable-execution#graceful-shutdown) has been requested, `runtime.drain_requested` is `True`. Read this inside a node to skip expensive work before the next superstep boundary:

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}

from langgraph.runtime import Runtime


def my_node(state: State, runtime: Runtime) -> State:
    if runtime.drain_requested:  # [!code highlight]
        return {"status": "skipped", "reason": runtime.drain_reason}
    return {"status": do_work()}

```

| Property | Type | Description |
| ----------------- | ------------- | ------------------------------------------------------------------------------------ |
| `drain_requested` | `bool` | `True` if `RunControl.request_drain()` has been called for this run. |
| `drain_reason` | `str \| None` | The reason string passed to `request_drain()`, or `None` if drain was not requested. |

Requires `langgraph>=1.2`. See [Graceful shutdown](/oss/python/langgraph/durable-execution#graceful-shutdown) for the full `RunControl` API.

## Add node caching

Node caching is useful in cases where you want to avoid repeating operations, like when doing something expensive (either in terms of time or cost). LangGraph lets you add individualized caching policies to nodes in a graph.

To configure a cache policy, pass the `cache_policy` parameter to the [`add_node`](https://reference.langchain.com/python/langgraph/graph/state/StateGraph/add_node) function. In the following example, a [`CachePolicy`](https://reference.langchain.com/python/langgraph/types/CachePolicy) object is instantiated with a time to live of 120 seconds and the default `key_func` generator. Then it is associated with a node:

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}

from langgraph.types import CachePolicy

builder.add_node(

"node_name",

node_function,

cache_policy=CachePolicy(ttl=120),

)

```

Then, to enable node-level caching for a graph, set the `cache` argument when compiling the graph. The example below uses `InMemoryCache` to set up a graph with in-memory cache, but `SqliteCache` is also available.

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}

from langgraph.cache.memory import InMemoryCache

graph = builder.compile(cache=InMemoryCache())

```
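As a rough mental model of what a TTL-based node cache does, here is a plain-Python sketch. It is not LangGraph's `InMemoryCache` or `CachePolicy`: the `TTLNodeCache` class is hypothetical, and keying on the node name plus a JSON dump of the input is an assumption standing in for the default `key_func`. The injectable `clock` makes expiry easy to demonstrate.

```python
import json
import time
from typing import Any, Callable, Dict, Tuple


class TTLNodeCache:
    """Hypothetical per-node cache whose entries expire after `ttl` seconds."""

    def __init__(self, ttl: float, clock: Callable[[], float] = time.monotonic):
        self.ttl = ttl
        self.clock = clock
        self._entries: Dict[str, Tuple[float, Any]] = {}

    def _key(self, node_name: str, node_input: Dict[str, Any]) -> str:
        # Assumption: identical inputs (as canonical JSON) share a cache entry.
        return f"{node_name}:{json.dumps(node_input, sort_keys=True)}"

    def call(
        self, node_name: str, fn: Callable[[Dict[str, Any]], Any], node_input: Dict[str, Any]
    ) -> Any:
        key = self._key(node_name, node_input)
        now = self.clock()
        entry = self._entries.get(key)
        if entry is not None and now - entry[0] < self.ttl:
            return entry[1]  # fresh hit: skip the expensive call
        result = fn(node_input)  # miss or expired: recompute and store
        self._entries[key] = (now, result)
        return result


fake_now = [0.0]
calls = []


def expensive_node(node_input: Dict[str, Any]) -> Dict[str, Any]:
    calls.append(node_input)
    return {"result": node_input["x"] * 2}


cache = TTLNodeCache(ttl=120, clock=lambda: fake_now[0])
cache.call("double", expensive_node, {"x": 21})  # computed
cache.call("double", expensive_node, {"x": 21})  # served from the cache
fake_now[0] = 121.0
out = cache.call("double", expensive_node, {"x": 21})  # expired: recomputed
print(out, len(calls))  # {'result': 42} 2
```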

## Create a sequence of steps

**Prerequisites**

This guide assumes familiarity with the above section on [state](#define-and-update-state).

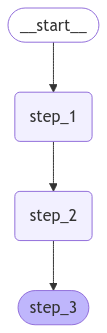

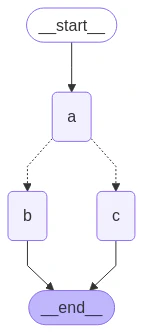

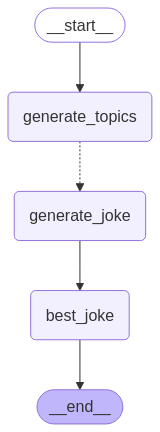

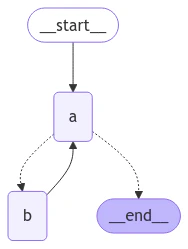

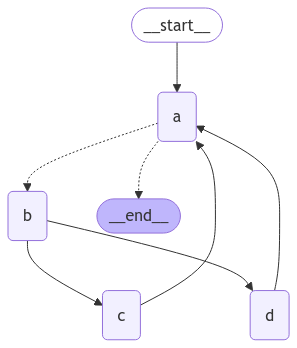

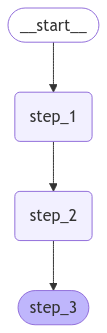

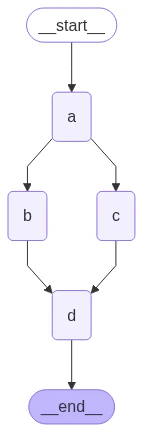

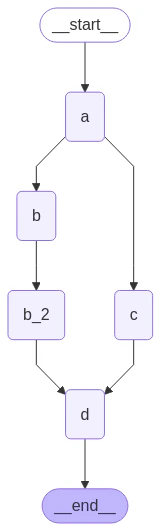

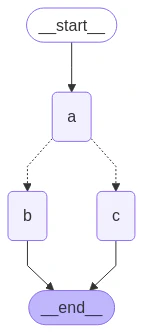

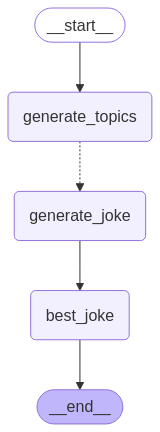

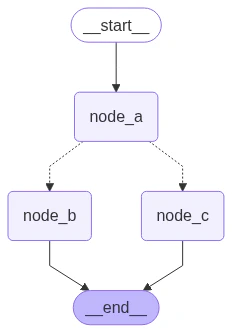

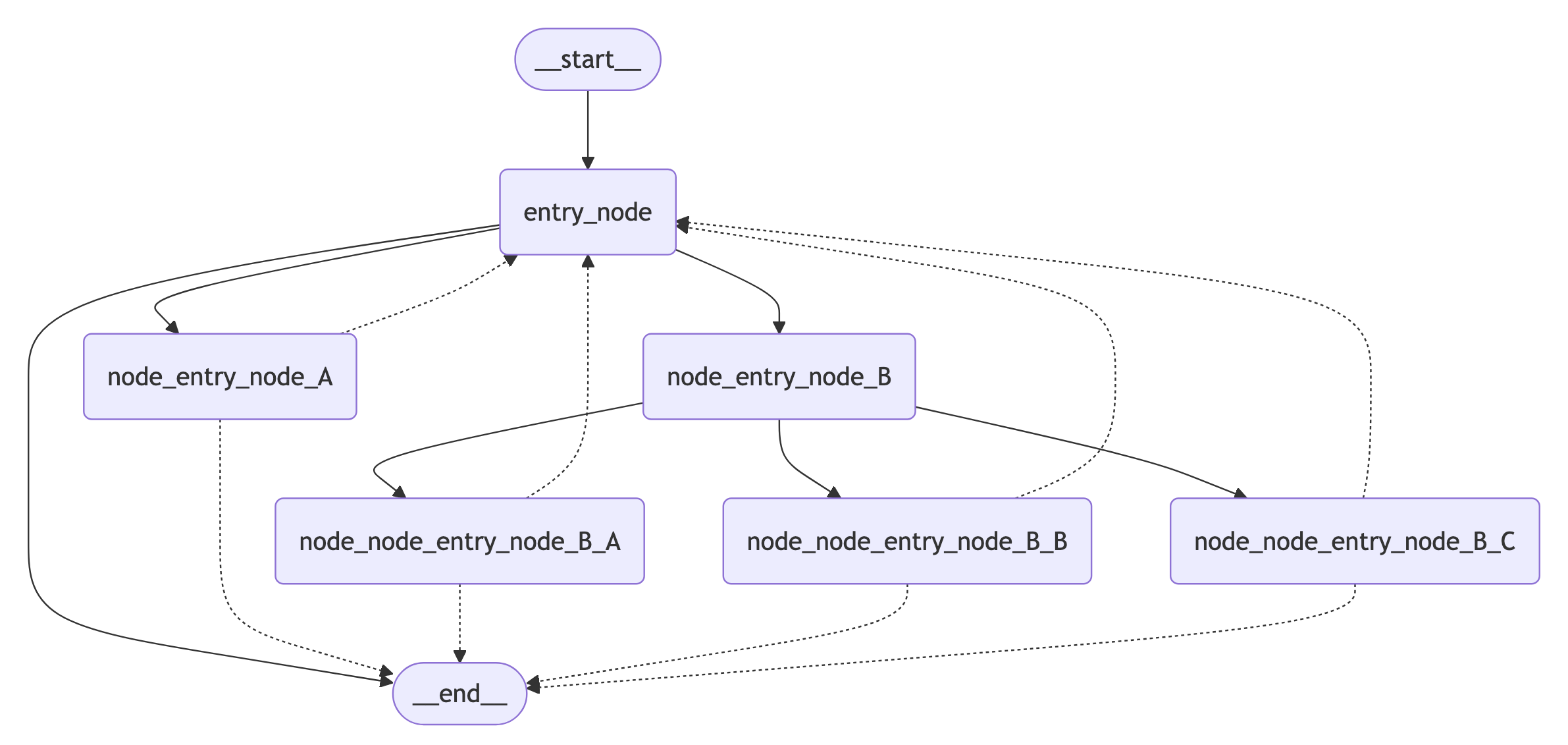

Here we demonstrate how to construct a simple sequence of steps. We will show:

1. How to build a sequential graph

2. How to use the built-in shorthand for constructing similar graphs.

To add a sequence of nodes, we use the [`add_node`](https://reference.langchain.com/python/langgraph/graph/state/StateGraph/add_node) and [`add_edge`](https://reference.langchain.com/python/langgraph/pregel/_draw/add_edge) methods of our [graph](/oss/python/langgraph/graph-api#stategraph):

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}

from langgraph.graph import START, StateGraph

builder = StateGraph(State)

# Add nodes

builder.add_node(step_1)

builder.add_node(step_2)

builder.add_node(step_3)

# Add edges

builder.add_edge(START, "step_1")

builder.add_edge("step_1", "step_2")

builder.add_edge("step_2", "step_3")

```

We can also use the built-in shorthand `.add_sequence`:

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}

builder = StateGraph(State).add_sequence([step_1, step_2, step_3])

builder.add_edge(START, "step_1")

```

LangGraph makes it easy to add an underlying persistence layer to your application.

This allows state to be checkpointed in between the execution of nodes, so your LangGraph nodes govern:

* How state updates are [checkpointed](/oss/python/langgraph/persistence)

* How interruptions are resumed in [human-in-the-loop](/oss/python/langgraph/interrupts) workflows

* How we can "rewind" and branch-off executions using LangGraph's [time travel](/oss/python/langgraph/use-time-travel) features

They also determine how execution steps are [streamed](/oss/python/langgraph/streaming), and how your application is visualized and debugged using [Studio](/langsmith/studio).

Let's demonstrate an end-to-end example. We will create a sequence of three steps:

1. Populate a value in a key of the state

2. Update the same value

3. Populate a different value

Let's first define our [state](/oss/python/langgraph/graph-api#state). This governs the [schema of the graph](/oss/python/langgraph/graph-api#schema), and can also specify how to apply updates. See [Process state updates with reducers](#process-state-updates-with-reducers) for more detail.

In our case, we will just keep track of two values:

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}

from typing_extensions import TypedDict


class State(TypedDict):
    value_1: str
    value_2: int

```

Our [nodes](/oss/python/langgraph/graph-api#nodes) are just Python functions that read our graph's state and make updates to it. The first argument to this function will always be the state:

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}

def step_1(state: State):
    return {"value_1": "a"}


def step_2(state: State):
    current_value_1 = state["value_1"]
    return {"value_1": f"{current_value_1} b"}


def step_3(state: State):
    return {"value_2": 10}

```

Note that when issuing updates to the state, each node can just specify the value of the key it wishes to update.

By default, this will **overwrite** the value of the corresponding key. You can also use [reducers](/oss/python/langgraph/graph-api#reducers) to control how updates are processed—for example, you can append successive updates to a key instead. See [Process state updates with reducers](#process-state-updates-with-reducers) for more detail.

Finally, we define the graph. We use [StateGraph](/oss/python/langgraph/graph-api#stategraph) to define a graph that operates on this state.

We will then use [`add_node`](/oss/python/langgraph/graph-api#nodes) and [`add_edge`](/oss/python/langgraph/graph-api#edges) to populate our graph and define its control flow.

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}

from langgraph.graph import START, StateGraph

builder = StateGraph(State)

# Add nodes

builder.add_node(step_1)

builder.add_node(step_2)

builder.add_node(step_3)

# Add edges

builder.add_edge(START, "step_1")

builder.add_edge("step_1", "step_2")

builder.add_edge("step_2", "step_3")

```

**Specifying custom names**

You can specify custom names for nodes using [`add_node`](https://reference.langchain.com/python/langgraph/graph/state/StateGraph/add_node):

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}

builder.add_node("my_node", step_1)

```

Note that:

* [`add_edge`](https://reference.langchain.com/python/langgraph/pregel/_draw/add_edge) takes the names of nodes, which for functions defaults to `node.__name__`.

* We must specify the entry point of the graph. For this we add an edge with the [START node](/oss/python/langgraph/graph-api#start-node).

* The graph halts when there are no more nodes to execute.

We next [compile](/oss/python/langgraph/graph-api#compiling-your-graph) our graph. This provides a few basic checks on the structure of the graph (e.g., identifying orphaned nodes). If we were adding persistence to our application via a [checkpointer](/oss/python/langgraph/persistence), it would also be passed in here.

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}

graph = builder.compile()

```

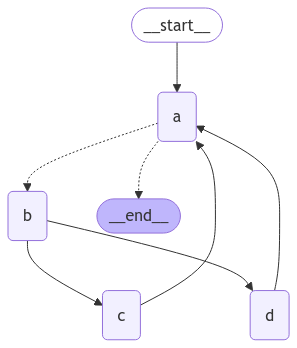

LangGraph provides built-in utilities for visualizing your graph. Let's inspect our sequence. See [Visualize your graph](#visualize-your-graph) for detail on visualization.

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}

from IPython.display import Image, display

display(Image(graph.get_graph().draw_mermaid_png()))

```
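To see what the three-step sequence computes, here is a plain-Python model of running it: each step's partial update is merged into the state by overwriting keys, which is the default when no reducer is set. `run_sequence` is a hypothetical helper, not part of LangGraph; invoking the compiled graph with `{"value_1": "c"}` should produce the same final state.

```python
from typing import Callable, Dict, List

StateDict = Dict[str, object]


def step_1(state: StateDict) -> StateDict:
    return {"value_1": "a"}


def step_2(state: StateDict) -> StateDict:
    return {"value_1": f"{state['value_1']} b"}


def step_3(state: StateDict) -> StateDict:
    return {"value_2": 10}


def run_sequence(steps: List[Callable[[StateDict], StateDict]], state: StateDict) -> StateDict:
    state = dict(state)
    for step in steps:
        # No reducers: every key in a step's update replaces the old value.
        state.update(step(state))
    return state


final = run_sequence([step_1, step_2, step_3], {"value_1": "c"})
print(final)  # {'value_1': 'a b', 'value_2': 10}
```

The input value `"c"` is overwritten by `step_1`, extended by `step_2`, and `step_3` populates a second key.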

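The invocation below uses the single-node chat graph from [Define and update state](#define-and-update-state), whose state has a `messages` list and an `extra_field` integer, rather than the three-step sequence. Let's proceed with a simple invocation: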

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}

from langchain.messages import HumanMessage

result = graph.invoke({"messages": [HumanMessage("Hi")]})

result

```

```

{'messages': [HumanMessage(content='Hi'), AIMessage(content='Hello!')], 'extra_field': 10}

```

Note that:

* We kicked off invocation by updating a single key of the state.

* We receive the entire state in the invocation result.

For convenience, we frequently inspect the content of [message objects](https://python.langchain.com/docs/concepts/messages/) via pretty-print:

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}

for message in result["messages"]:
    message.pretty_print()

```

```

================================ Human Message ================================

Hi

================================== Ai Message ==================================

Hello!

```

### Process state updates with reducers

Each key in the state can have its own independent [reducer](/oss/python/langgraph/graph-api#reducers) function, which controls how updates from nodes are applied. If no reducer function is explicitly specified then it is assumed that all updates to the key should override it.

For `TypedDict` state schemas, we can define reducers by annotating the corresponding field of the state with a reducer function.
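The following plain-Python sketch models how per-key reducers can be read from `Annotated` metadata and applied to a node's update. It is a simplified model, not LangGraph's channel implementation; `reducers_for` and `apply_update` are hypothetical helpers, and plain strings stand in for message objects.

```python
import operator
from typing import Annotated, Any, Callable, Dict, Optional, TypedDict, get_type_hints


class State(TypedDict):
    messages: Annotated[list, operator.add]  # reducer: concatenate lists
    extra_field: int  # no reducer: updates overwrite


Reducer = Callable[[Any, Any], Any]


def reducers_for(schema: type) -> Dict[str, Optional[Reducer]]:
    """Read each field's reducer (if any) from its Annotated metadata."""
    hints = get_type_hints(schema, include_extras=True)
    return {
        key: (hint.__metadata__[0] if hasattr(hint, "__metadata__") else None)
        for key, hint in hints.items()
    }


def apply_update(
    state: Dict[str, Any], update: Dict[str, Any], reducers: Dict[str, Optional[Reducer]]
) -> Dict[str, Any]:
    new_state = dict(state)
    for key, value in update.items():
        reducer = reducers.get(key)
        if reducer is None or key not in new_state:
            new_state[key] = value  # default: overwrite (or initialize) the key
        else:
            new_state[key] = reducer(new_state[key], value)  # e.g. list concatenation
    return new_state


reducers = reducers_for(State)
state = {"messages": ["Hi"], "extra_field": 1}
state = apply_update(state, {"messages": ["Hello!"], "extra_field": 10}, reducers)
print(state)  # {'messages': ['Hi', 'Hello!'], 'extra_field': 10}
```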

In the earlier example, our node updated the `"messages"` key in the state by appending a message to it. Below, we add a reducer to this key, such that updates are automatically appended:

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}

from langchain.messages import AnyMessage
from typing_extensions import Annotated, TypedDict


def add(left, right):
    """Can also import `add` from the `operator` built-in."""
    return left + right


class State(TypedDict):
    messages: Annotated[list[AnyMessage], add]  # [!code highlight]
    extra_field: int

```

Now our node can be simplified:

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}

from langchain.messages import AIMessage


def node(state: State):
    new_message = AIMessage("Hello!")
    return {"messages": [new_message], "extra_field": 10}  # [!code highlight]

```

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}

from langchain.messages import HumanMessage
from langgraph.graph import START, StateGraph

graph = StateGraph(State).add_node(node).add_edge(START, "node").compile()
result = graph.invoke({"messages": [HumanMessage("Hi")]})
for message in result["messages"]:
    message.pretty_print()

```

```

================================ Human Message ================================

Hi

================================== Ai Message ==================================

Hello!

```

#### MessagesState

In practice, there are additional considerations for updating lists of messages:

* We may wish to update an existing message in the state.

* We may want to accept short-hands for [message formats](/oss/python/langgraph/graph-api#using-messages-in-your-graph), such as [OpenAI format](https://python.langchain.com/docs/concepts/messages/#openai-format).

LangGraph includes a built-in reducer [`add_messages`](https://reference.langchain.com/python/langgraph/graph/message/add_messages) that handles these considerations:

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}

from langchain.messages import AIMessage, AnyMessage
from langgraph.graph import StateGraph
from langgraph.graph.message import add_messages
from typing_extensions import Annotated, TypedDict


class State(TypedDict):
    messages: Annotated[list[AnyMessage], add_messages]  # [!code highlight]
    extra_field: int


def node(state: State):
    new_message = AIMessage("Hello!")
    return {"messages": [new_message], "extra_field": 10}


graph = StateGraph(State).add_node(node).set_entry_point("node").compile()

```

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}

input_message = {"role": "user", "content": "Hi"}  # [!code highlight]
result = graph.invoke({"messages": [input_message]})
for message in result["messages"]:
    message.pretty_print()

```

```

================================ Human Message ================================

Hi

================================== Ai Message ==================================

Hello!

```

This is a versatile representation of state for applications involving [chat models](https://python.langchain.com/docs/concepts/chat_models/). LangGraph includes a prebuilt `MessagesState` for convenience, so that we can have:

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}

from langgraph.graph import MessagesState


class State(MessagesState):
    extra_field: int

```
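The ability to update an existing message, the first consideration listed above, comes down to merging by message ID. Here is a simplified plain-Python model of that idea; `Msg` and `merge_by_id` are hypothetical, and the real `add_messages` does more (for example, it also accepts OpenAI-format dictionaries, as in the example above).

```python
import uuid
from dataclasses import dataclass, field
from typing import List


@dataclass
class Msg:
    """Hypothetical minimal message type used only in this model."""
    content: str
    id: str = field(default_factory=lambda: str(uuid.uuid4()))


def merge_by_id(left: List[Msg], right: List[Msg]) -> List[Msg]:
    merged = list(left)
    position = {msg.id: i for i, msg in enumerate(merged)}
    for msg in right:
        if msg.id in position:
            merged[position[msg.id]] = msg  # same ID: replace in place
        else:
            position[msg.id] = len(merged)
            merged.append(msg)  # new ID: append to the end
    return merged


history = [Msg("Hi", id="1"), Msg("Helo!", id="2")]
# Fix the typo in message "2" and add a new message "3"
history = merge_by_id(history, [Msg("Hello!", id="2"), Msg("How can I help?", id="3")])
print([m.content for m in history])  # ['Hi', 'Hello!', 'How can I help?']
```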

### Bypass reducers with `Overwrite`

In some cases, you may want to bypass a reducer and directly overwrite a state value. LangGraph provides the [`Overwrite`](https://reference.langchain.com/python/langgraph/types/) type for this purpose. When a node returns a value wrapped with `Overwrite`, the reducer is bypassed and the channel is set directly to that value.

This is useful when you want to reset or replace accumulated state rather than merge it with existing values.

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}

import operator

from langgraph.graph import StateGraph, START, END
from langgraph.types import Overwrite
from typing_extensions import Annotated, TypedDict


class State(TypedDict):
    messages: Annotated[list, operator.add]


def add_message(state: State):
    return {"messages": ["first message"]}


def replace_messages(state: State):
    # Bypass the reducer and replace the entire messages list
    return {"messages": Overwrite(["replacement message"])}


builder = StateGraph(State)
builder.add_node("add_message", add_message)
builder.add_node("replace_messages", replace_messages)
builder.add_edge(START, "add_message")
builder.add_edge("add_message", "replace_messages")
builder.add_edge("replace_messages", END)
graph = builder.compile()

result = graph.invoke({"messages": ["initial"]})
print(result["messages"])

```

```

['replacement message']

```

You can also use JSON format with the special key `"__overwrite__"`:

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}

def replace_messages(state: State):
    return {"messages": {"__overwrite__": ["replacement message"]}}

```

When nodes execute in parallel, only one node can use `Overwrite` on the same state key in a given super-step. If multiple nodes attempt to overwrite the same key in the same super-step, an `InvalidUpdateError` will be raised.
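A small plain-Python model can make the `Overwrite` rules concrete: a wrapped value (or the `{"__overwrite__": ...}` form) skips the reducer, and two overwrites of one key in the same super-step are rejected. Everything here is a hypothetical stand-in (`OverwriteValue`, `ConflictingOverwriteError`, `apply_superstep`), not LangGraph's code, and the model does not try to reproduce how LangGraph orders an overwrite against ordinary writes in the same super-step.

```python
import operator
from dataclasses import dataclass
from typing import Any, Callable, List, Tuple


@dataclass(frozen=True)
class OverwriteValue:
    """Hypothetical stand-in for LangGraph's `Overwrite` wrapper."""
    value: Any


class ConflictingOverwriteError(Exception):
    """Stand-in for the InvalidUpdateError LangGraph raises."""


def as_overwrite(write: Any) -> Tuple[bool, Any]:
    # Accept both the wrapper and the JSON form {"__overwrite__": value}.
    if isinstance(write, OverwriteValue):
        return True, write.value
    if isinstance(write, dict) and set(write) == {"__overwrite__"}:
        return True, write["__overwrite__"]
    return False, None


def apply_superstep(current: Any, writes: List[Any], reducer: Callable[[Any, Any], Any]) -> Any:
    """Apply one super-step's writes for a single key that has a reducer."""
    if sum(as_overwrite(w)[0] for w in writes) > 1:
        raise ConflictingOverwriteError("more than one overwrite for the same key")
    value = current
    for write in writes:
        is_overwrite, new_value = as_overwrite(write)
        # An overwrite replaces the value; anything else goes through the reducer.
        value = new_value if is_overwrite else reducer(value, write)
    return value


messages = ["initial"]
messages = apply_superstep(messages, [["first message"]], operator.add)
messages = apply_superstep(messages, [OverwriteValue(["replacement message"])], operator.add)
print(messages)  # ['replacement message']

try:
    apply_superstep(messages, [OverwriteValue(["x"]), {"__overwrite__": ["y"]}], operator.add)
except ConflictingOverwriteError as exc:
    print(f"rejected: {exc}")
```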

### Define input and output schemas

By default, `StateGraph` operates with a single schema, and all nodes are expected to communicate using that schema. However, it's also possible to define distinct input and output schemas for a graph.

When distinct schemas are specified, an internal schema will still be used for communication between nodes. The input schema ensures that the provided input matches the expected structure, while the output schema filters the internal data to return only the relevant information according to the defined output schema.

Below, we'll see how to define distinct input and output schema.

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}

from langgraph.graph import StateGraph, START, END
from typing_extensions import TypedDict


# Define the schema for the input
class InputState(TypedDict):
    question: str


# Define the schema for the output
class OutputState(TypedDict):
    answer: str


# Define the overall schema, combining both input and output
class OverallState(InputState, OutputState):
    pass


# Define the node that processes the input and generates an answer
def answer_node(state: InputState):
    # Example answer and an extra key
    return {"answer": "bye", "question": state["question"]}


# Build the graph with input and output schemas specified
builder = StateGraph(OverallState, input_schema=InputState, output_schema=OutputState)
builder.add_node(answer_node)  # Add the answer node
builder.add_edge(START, "answer_node")  # Define the starting edge
builder.add_edge("answer_node", END)  # Define the ending edge
graph = builder.compile()  # Compile the graph

# Invoke the graph with an input and print the result
print(graph.invoke({"question": "hi"}))

```

```

{'answer': 'bye'}

```

Notice that the output of `invoke` includes only the keys defined in the output schema (`answer`), even though the node also returned `question`.
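Conceptually, the input and output schemas act as filters over the graph's internal state. The sketch below models that filtering in plain Python by keeping only the keys a `TypedDict` declares; `project` is a hypothetical helper, not a LangGraph API.

```python
from typing import Any, Dict, TypedDict, get_type_hints


class InputState(TypedDict):
    question: str


class OutputState(TypedDict):
    answer: str


class OverallState(InputState, OutputState):
    pass


def project(state: Dict[str, Any], schema: type) -> Dict[str, Any]:
    """Keep only the keys declared on `schema` (including inherited ones)."""
    keys = get_type_hints(schema)
    return {key: value for key, value in state.items() if key in keys}


internal = {"question": "hi", "answer": "bye"}  # what the nodes share internally
print(project(internal, OutputState))   # {'answer': 'bye'}
print(project(internal, InputState))    # {'question': 'hi'}
print(project(internal, OverallState))  # {'question': 'hi', 'answer': 'bye'}
```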

### Pass private state between nodes

In some cases, you may want nodes to exchange information that is crucial for intermediate logic but doesn't need to be part of the main schema of the graph. This private data is not relevant to the overall input/output of the graph and should only be shared between certain nodes.

Below, we'll create an example sequential graph consisting of three nodes (node\_1, node\_2 and node\_3), where private data is passed between the first two steps (node\_1 and node\_2), while the third step (node\_3) only has access to the public overall state.

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}

from langgraph.graph import StateGraph, START, END
from typing_extensions import TypedDict


# The overall state of the graph (this is the public state shared across nodes)
class OverallState(TypedDict):
    a: str


# Output from node_1 contains private data that is not part of the overall state
class Node1Output(TypedDict):
    private_data: str


# The private data is only shared between node_1 and node_2
def node_1(state: OverallState) -> Node1Output:
    output = {"private_data": "set by node_1"}
    print(f"Entered node `node_1`:\n\tInput: {state}.\n\tReturned: {output}")
    return output


# Node 2 input only requests the private data available after node_1
class Node2Input(TypedDict):
    private_data: str


def node_2(state: Node2Input) -> OverallState:
    output = {"a": "set by node_2"}
    print(f"Entered node `node_2`:\n\tInput: {state}.\n\tReturned: {output}")
    return output


# Node 3 only has access to the overall state (no access to private data from node_1)
def node_3(state: OverallState) -> OverallState:
    output = {"a": "set by node_3"}
    print(f"Entered node `node_3`:\n\tInput: {state}.\n\tReturned: {output}")
    return output


# Connect nodes in a sequence
# node_2 accepts private data from node_1, whereas
# node_3 does not see the private data.
builder = StateGraph(OverallState).add_sequence([node_1, node_2, node_3])
builder.add_edge(START, "node_1")
graph = builder.compile()

# Invoke the graph with the initial state
response = graph.invoke(
    {
        "a": "set at start",
    }
)

print()
print(f"Output of graph invocation: {response}")

```

```

Entered node `node_1`:
	Input: {'a': 'set at start'}.
	Returned: {'private_data': 'set by node_1'}
Entered node `node_2`:
	Input: {'private_data': 'set by node_1'}.
	Returned: {'a': 'set by node_2'}
Entered node `node_3`:
	Input: {'a': 'set by node_2'}.
	Returned: {'a': 'set by node_3'}

Output of graph invocation: {'a': 'set by node_3'}

```
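The private-state behavior follows from each node reading only the keys its own input annotation declares. The plain-Python sketch below models that idea: it reads the type hint on a node's first parameter and passes only those keys. `input_schema` and `run_with_private_state` are hypothetical helpers, not LangGraph internals, and the prints from the example above are omitted.

```python
import inspect
from typing import Any, Callable, Dict, List, Tuple, TypedDict, get_type_hints


class OverallState(TypedDict):
    a: str


class Node1Output(TypedDict):
    private_data: str


class Node2Input(TypedDict):
    private_data: str


def node_1(state: OverallState) -> Node1Output:
    return {"private_data": "set by node_1"}


def node_2(state: Node2Input) -> OverallState:
    return {"a": "set by node_2"}


def node_3(state: OverallState) -> OverallState:
    return {"a": "set by node_3"}


def input_schema(node: Callable) -> type:
    # The annotation on the node's first parameter decides what it can read.
    first_param = next(iter(inspect.signature(node).parameters))
    return get_type_hints(node)[first_param]


def run_with_private_state(
    nodes: List[Callable], state: Dict[str, Any]
) -> Tuple[Dict[str, Any], List[Tuple[str, Dict[str, Any]]]]:
    channels = dict(state)  # holds public and private keys alike
    views = []
    for node in nodes:
        wanted = get_type_hints(input_schema(node))
        view = {k: v for k, v in channels.items() if k in wanted}
        views.append((node.__name__, view))
        channels.update(node(view))
    # The caller only gets back the keys of the graph's public schema.
    public_keys = get_type_hints(OverallState)
    return {k: v for k, v in channels.items() if k in public_keys}, views


output, views = run_with_private_state([node_1, node_2, node_3], {"a": "set at start"})
for name, view in views:
    print(name, view)
print(output)  # {'a': 'set by node_3'}
```

The inputs recorded in `views` mirror the printed inputs above: `node_2` sees only `private_data`, and `node_3` sees only `a`.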

### Use Pydantic models for graph state

A [StateGraph](https://langchain-ai.github.io/langgraph/reference/graphs.md#langgraph.graph.StateGraph) accepts a `state_schema` argument on initialization that specifies the "shape" of the state that the nodes in the graph can access and update.

In our examples, we typically use a Python-native `TypedDict` or [`dataclass`](https://docs.python.org/3/library/dataclasses.html) for `state_schema`, but `state_schema` can be any [type](https://docs.python.org/3/library/stdtypes.html#type-objects).

Here, we'll see how a [Pydantic BaseModel](https://docs.pydantic.dev/latest/api/base_model/) can be used for `state_schema` to add run-time validation on **inputs**.

**Known Limitations**

* Currently, the output of the graph will **NOT** be an instance of a Pydantic model.

* Run-time validation only occurs on inputs to the first node in the graph, not on subsequent nodes or outputs.

* The validation error trace from Pydantic does not show which node the error arises in.

* Pydantic's recursive validation can be slow. For performance-sensitive applications, you may want to consider using a `dataclass` instead.

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}

from langgraph.graph import StateGraph, START, END
from pydantic import BaseModel


# The overall state of the graph (this is the public state shared across nodes)
class OverallState(BaseModel):
    a: str


def node(state: OverallState):
    return {"a": "goodbye"}


# Build the state graph
builder = StateGraph(OverallState)
builder.add_node(node)  # `node` is the only node in the graph
builder.add_edge(START, "node")  # Start the graph with `node`
builder.add_edge("node", END)  # End the graph after `node`
graph = builder.compile()

# Test the graph with a valid input
graph.invoke({"a": "hello"})

```

Invoke the graph with an **invalid** input:

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}

try:
    graph.invoke({"a": 123})  # Should be a string
except Exception as e:
    print("An exception was raised because `a` is an integer rather than a string.")
    print(e)

```

```

An exception was raised because `a` is an integer rather than a string.
1 validation error for OverallState
a
  Input should be a valid string [type=string_type, input_value=123, input_type=int]
    For further information visit https://errors.pydantic.dev/2.9/v/string_type

```

See below for additional features of Pydantic model state:

When using Pydantic models as state schemas, it's important to understand how serialization works, especially when:

* Passing Pydantic objects as inputs

* Receiving outputs from the graph

* Working with nested Pydantic models

Let's see these behaviors in action.

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}

from langgraph.graph import StateGraph, START, END
from pydantic import BaseModel


class NestedModel(BaseModel):
    value: str


class ComplexState(BaseModel):
    text: str
    count: int
    nested: NestedModel


def process_node(state: ComplexState):
    # Node receives a validated Pydantic object
    print(f"Input state type: {type(state)}")
    print(f"Nested type: {type(state.nested)}")
    # Return a dictionary update
    return {"text": state.text + " processed", "count": state.count + 1}


# Build the graph
builder = StateGraph(ComplexState)
builder.add_node("process", process_node)
builder.add_edge(START, "process")
builder.add_edge("process", END)
graph = builder.compile()

# Create a Pydantic instance for input
input_state = ComplexState(text="hello", count=0, nested=NestedModel(value="test"))
print(f"Input object type: {type(input_state)}")

# Invoke graph with a Pydantic instance
result = graph.invoke(input_state)
print(f"Output type: {type(result)}")
print(f"Output content: {result}")

# Convert back to Pydantic model if needed
output_model = ComplexState(**result)
print(f"Converted back to Pydantic: {type(output_model)}")

```

Pydantic performs runtime type coercion for certain data types. This can be helpful, but it can also lead to unexpected behavior if you're not aware of it.

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}

from langgraph.graph import StateGraph, START, END
from pydantic import BaseModel


class CoercionExample(BaseModel):
    # Pydantic will coerce string numbers to integers
    number: int
    # Pydantic will parse string booleans to bool
    flag: bool


def inspect_node(state: CoercionExample):
    print(f"number: {state.number} (type: {type(state.number)})")
    print(f"flag: {state.flag} (type: {type(state.flag)})")
    return {}


builder = StateGraph(CoercionExample)
builder.add_node("inspect", inspect_node)
builder.add_edge(START, "inspect")
builder.add_edge("inspect", END)
graph = builder.compile()

# Demonstrate coercion with string inputs that will be converted
result = graph.invoke({"number": "42", "flag": "true"})

# This would fail with a validation error
try:
    graph.invoke({"number": "not-a-number", "flag": "true"})
except Exception as e:
    print(f"\nExpected validation error: {e}")

```

When working with LangChain message types in your state schema, there are important considerations for serialization. You should use `AnyMessage` (rather than `BaseMessage`) for proper serialization/deserialization when using message objects over the wire.

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}

from langgraph.graph import StateGraph, START, END
from pydantic import BaseModel
from langchain.messages import HumanMessage, AIMessage, AnyMessage
from typing import List


class ChatState(BaseModel):
    messages: List[AnyMessage]
    context: str


def add_message(state: ChatState):
    return {"messages": state.messages + [AIMessage(content="Hello there!")]}


builder = StateGraph(ChatState)
builder.add_node("add_message", add_message)
builder.add_edge(START, "add_message")
builder.add_edge("add_message", END)
graph = builder.compile()

# Create input with a message
initial_state = ChatState(
    messages=[HumanMessage(content="Hi")], context="Customer support chat"
)
result = graph.invoke(initial_state)
print(f"Output: {result}")

# Convert back to Pydantic model to see message types
output_model = ChatState(**result)
for i, msg in enumerate(output_model.messages):
    print(f"Message {i}: {type(msg).__name__} - {msg.content}")

```
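Why does the choice of `AnyMessage` matter? Once serialized, each message is plain data, and a deserializer that only knows the base class cannot tell which subclass to rebuild. `AnyMessage` is, roughly, a union of the concrete message classes discriminated by each message's `type` field (such as `"human"` or `"ai"`). The stdlib sketch below illustrates that tag-based dispatch with hypothetical `Human` and `AI` dataclasses; it is not LangChain's implementation.

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
from dataclasses import asdict, dataclass


# Hypothetical stand-ins for message classes; each carries a type tag.
@dataclass
class Base:
    content: str
    type: str = "base"


@dataclass
class Human(Base):
    type: str = "human"


@dataclass
class AI(Base):
    type: str = "ai"


# Map each tag to the concrete class that should be rebuilt from it.
REGISTRY = {"human": Human, "ai": AI}


def load_as_base(data: dict) -> Base:
    # Deserializing against the base class loses the concrete subclass.
    return Base(content=data["content"])


def load_discriminated(data: dict) -> Base:
    # Use the "type" tag to pick the concrete class, like a discriminated union.
    cls = REGISTRY[data["type"]]
    return cls(content=data["content"])


# Simulate messages that have been serialized to plain dicts.
wire = [asdict(Human("Hi")), asdict(AI("Hello there!"))]
print([type(load_as_base(d)).__name__ for d in wire])        # ['Base', 'Base']
print([type(load_discriminated(d)).__name__ for d in wire])  # ['Human', 'AI']
```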

## Add runtime configuration

Sometimes you want to configure your graph when calling it. For example, you might want to specify which LLM or system prompt to use at runtime, *without polluting the graph state with these parameters*.

To add runtime configuration:

1. Specify a schema for your configuration

2. Add the configuration to the function signature for nodes or conditional edges

3. Pass the configuration to the graph at invocation time using the `context` argument.

See below for a simple example:

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
from langgraph.graph import END, StateGraph, START
from langgraph.runtime import Runtime
from typing_extensions import TypedDict


# 1. Specify the context schema
class ContextSchema(TypedDict):
    my_runtime_value: str


# 2. Define a graph that accesses the context in a node
class State(TypedDict):
    my_state_value: int


def node(state: State, runtime: Runtime[ContextSchema]):  # [!code highlight]
    if runtime.context["my_runtime_value"] == "a":  # [!code highlight]
        return {"my_state_value": 1}
    elif runtime.context["my_runtime_value"] == "b":  # [!code highlight]
        return {"my_state_value": 2}
    else:
        raise ValueError("Unknown values.")


builder = StateGraph(State, context_schema=ContextSchema)  # [!code highlight]
builder.add_node(node)
builder.add_edge(START, "node")
builder.add_edge("node", END)
graph = builder.compile()

# 3. Pass in the context at runtime:
print(graph.invoke({}, context={"my_runtime_value": "a"}))  # [!code highlight]
print(graph.invoke({}, context={"my_runtime_value": "b"}))  # [!code highlight]
```

```
{'my_state_value': 1}
{'my_state_value': 2}
```
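The key property in the example above is that nodes can read the runtime context, but it is never merged into state. The stdlib sketch below mimics that separation with a hypothetical `Runtime` dataclass and a hypothetical `invoke` helper: only the dict a node returns is merged into state, so the context values never appear in the output. These names are illustrative assumptions, not LangGraph's internals.

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
from dataclasses import dataclass
from typing import Any, Callable, Generic, TypeVar

C = TypeVar("C")


@dataclass(frozen=True)
class Runtime(Generic[C]):
    # Hypothetical container: read-only context handed to every node.
    context: C


def invoke(
    nodes: list[Callable[[dict, Runtime[Any]], dict]],
    state: dict,
    context: Any,
) -> dict:
    """Run nodes in order, merging only their returned updates into state."""
    runtime = Runtime(context)
    state = dict(state)
    for node in nodes:
        state.update(node(state, runtime))
    return state


def node(state: dict, runtime: Runtime[dict]) -> dict:
    # Branch on the context without writing it into state.
    return {"my_state_value": 1 if runtime.context["my_runtime_value"] == "a" else 2}


print(invoke([node], {}, {"my_runtime_value": "a"}))  # {'my_state_value': 1}
print(invoke([node], {}, {"my_runtime_value": "b"}))  # {'my_state_value': 2}
```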

Below is a practical example in which we configure which LLM to use at runtime, choosing between OpenAI and Anthropic models.

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
from dataclasses import dataclass

from langchain.chat_models import init_chat_model
from langgraph.graph import MessagesState, END, StateGraph, START
from langgraph.runtime import Runtime


@dataclass
class ContextSchema:
    model_provider: str = "anthropic"


MODELS = {
    "anthropic": init_chat_model("claude-haiku-4-5-20251001"),
    "openai": init_chat_model("gpt-5.4-mini"),
}


def call_model(state: MessagesState, runtime: Runtime[ContextSchema]):
    # Look up the model selected by the runtime context
    model = MODELS[runtime.context.model_provider]
    response = model.invoke(state["messages"])
    return {"messages": [response]}


builder = StateGraph(MessagesState, context_schema=ContextSchema)
builder.add_node("model", call_model)
builder.add_edge(START, "model")
builder.add_edge("model", END)
graph = builder.compile()

# Usage
input_message = {"role": "user", "content": "hi"}

# With the default context, uses Anthropic
response_1 = graph.invoke(
    {"messages": [input_message]}, context=ContextSchema()
)["messages"][-1]

# Or select OpenAI explicitly
response_2 = graph.invoke(
    {"messages": [input_message]}, context=ContextSchema(model_provider="openai")
)["messages"][-1]

print(response_1.response_metadata["model_name"])
print(response_2.response_metadata["model_name"])
```

```
claude-haiku-4-5-20251001
gpt-5.4-mini
```
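If a caller passes an unknown provider, the lookup `MODELS[runtime.context.model_provider]` fails with a `KeyError` inside the node. One option, sketched below under the assumption that you construct the context dataclass yourself before invoking the graph, is to validate the provider in `__post_init__` so bad values fail before the graph runs. The lambdas are hypothetical stand-ins for chat models so the sketch runs offline without API keys.

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
from dataclasses import dataclass

# Hypothetical stand-ins for chat models, so the sketch runs without API keys.
MODELS = {
    "anthropic": lambda prompt: f"anthropic says: {prompt}",
    "openai": lambda prompt: f"openai says: {prompt}",
}


@dataclass
class ContextSchema:
    model_provider: str = "anthropic"

    def __post_init__(self) -> None:
        # Fail fast on an unknown provider instead of a KeyError inside a node.
        if self.model_provider not in MODELS:
            raise ValueError(
                f"unknown model_provider {self.model_provider!r}; "
                f"expected one of {sorted(MODELS)}"
            )


def call_model(prompt: str, context: ContextSchema) -> str:
    # Mirrors the node above: pick the model named by the context.
    model = MODELS[context.model_provider]
    return model(prompt)


print(call_model("hi", ContextSchema()))          # anthropic says: hi
print(call_model("hi", ContextSchema("openai")))  # openai says: hi
try:
    ContextSchema(model_provider="mistral")
except ValueError as err:
    print(err)
```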

The next example configures two parameters at runtime: the LLM and the system message.

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
from dataclasses import dataclass

from langchain.chat_models import init_chat_model
from langchain.messages import SystemMessage
from langgraph.graph import END, MessagesState, StateGraph, START
from langgraph.runtime import Runtime


@dataclass
class ContextSchema:
    model_provider: str = "anthropic"
    system_message: str | None = None


MODELS = {
    "anthropic": init_chat_model("claude-haiku-4-5-20251001"),
    "openai": init_chat_model("gpt-5.4-mini"),
}


def call_model(state: MessagesState, runtime: Runtime[ContextSchema]):
    model = MODELS[runtime.context.model_provider]
    messages = state["messages"]
    # Prepend the system message only when one is configured
    if (system_message := runtime.context.system_message):
        messages = [SystemMessage(system_message)] + messages
    response = model.invoke(messages)
    return {"messages": [response]}


builder = StateGraph(MessagesState, context_schema=ContextSchema)
builder.add_node("model", call_model)
builder.add_edge(START, "model")
builder.add_edge("model", END)
graph = builder.compile()

# Usage
input_message = {"role": "user", "content": "hi"}
response = graph.invoke(
    {"messages": [input_message]},
    context=ContextSchema(model_provider="openai", system_message="Respond in Italian."),
)
for message in response["messages"]:
    message.pretty_print()
```

```

================================ Human Message ================================

hi

================================== Ai Message ==================================

Ciao! Come posso aiutarti oggi?

```
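The only new logic in this version is the optional system-message prefix. Isolated as a pure function, with messages represented as hypothetical `(role, content)` tuples instead of LangChain message objects, it looks like the sketch below. Because a new list is built when prepending, the system message is sent to the model without being written back into the message history stored in state.

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
from __future__ import annotations


def with_system_message(
    messages: list[tuple[str, str]], system_message: str | None
) -> list[tuple[str, str]]:
    """Prepend a system message when one is configured; never mutate the input."""
    if system_message:
        # Build a new list so the caller's message history is left unchanged.
        return [("system", system_message)] + messages
    return messages


history = [("user", "hi")]
print(with_system_message(history, "Respond in Italian."))
# [('system', 'Respond in Italian.'), ('user', 'hi')]
print(with_system_message(history, None))
# [('user', 'hi')]
print(history)
# [('user', 'hi')]
```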

## Add retry policies

Many use cases call for a custom retry policy on a node, such as calling an API, querying a database, or calling an LLM. LangGraph lets you attach retry policies to individual nodes.

To configure a retry policy, pass the `retry_policy` parameter to [`add_node`](https://reference.langchain.com/python/langgraph/graph/state/StateGraph/add_node). The `retry_policy` parameter takes a `RetryPolicy` named tuple. Below we instantiate a `RetryPolicy` with its default parameters and associate it with a node:

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
from langgraph.types import RetryPolicy

# Assumes `builder` is a StateGraph and `node_function` is a node defined elsewhere
builder.add_node(
    "node_name",
    node_function,
    retry_policy=RetryPolicy(),
)
```
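To make the policy's behavior concrete, here is a stdlib-only sketch of a retry loop with exponential backoff. The keyword names mirror `RetryPolicy` fields (`initial_interval`, `backoff_factor`, `max_interval`, `max_attempts`, `retry_on`), but the loop itself is an illustrative assumption, not LangGraph's implementation: jitter is omitted, and the `sleep` function is injectable so the example runs instantly.

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical error type used to simulate a temporary failure."""


def run_with_retry(
    fn: Callable[[], T],
    *,
    initial_interval: float = 0.5,
    backoff_factor: float = 2.0,
    max_interval: float = 128.0,
    max_attempts: int = 3,
    retry_on: Callable[[Exception], bool] = lambda exc: True,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fn, retrying failures that retry_on accepts, with exponential backoff."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    interval = initial_interval
    for attempt in range(1, max_attempts + 1):
        try:
            return fn()
        except Exception as exc:
            # Re-raise on the final attempt or when the error is not retryable.
            if attempt == max_attempts or not retry_on(exc):
                raise
            # Wait (capped at max_interval), then grow the wait for the next retry.
            sleep(min(interval, max_interval))
            interval *= backoff_factor
    raise AssertionError("unreachable")


# Usage: a function that fails twice, then succeeds.
calls = {"n": 0}
waits: list[float] = []


def flaky() -> str:
    calls["n"] += 1
    if calls["n"] < 3:
        raise TransientError("temporary failure")
    return "ok"


print(run_with_retry(flaky, sleep=waits.append))  # ok
print(waits)  # [0.5, 1.0]
```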

By default, the `retry_on` parameter uses the `default_retry_on` function, which retries on any exception except for the following:

* `ValueError`

* `TypeError`

* `ArithmeticError`

* `ImportError`

* `LookupError`

* `NameError`

* `SyntaxError`

* `RuntimeError`

* `ReferenceError`

* `StopIteration`

* `StopAsyncIteration`

* `OSError`

In addition, for exceptions from popular HTTP request libraries such as `requests` and `httpx`, it retries only on 5xx status codes.
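The rules above amount to a predicate over the raised exception. The sketch below is a simplified, stdlib-only approximation of them: `HTTPStatusLikeError` is a hypothetical stand-in for the HTTP error classes of `requests` and `httpx`, and the real `default_retry_on` may handle additional cases that this sketch does not.

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
# Exception types that the documented default policy does not retry.
NON_RETRYABLE = (
    ValueError, TypeError, ArithmeticError, ImportError, LookupError,
    NameError, SyntaxError, RuntimeError, ReferenceError,
    StopIteration, StopAsyncIteration, OSError,
)


class HTTPStatusLikeError(Exception):
    """Hypothetical stand-in for an HTTP client's status-code error."""

    def __init__(self, status_code: int) -> None:
        super().__init__(f"HTTP {status_code}")
        self.status_code = status_code


def approx_default_retry_on(exc: Exception) -> bool:
    # HTTP errors: retry only server-side (5xx) failures.
    if isinstance(exc, HTTPStatusLikeError):
        return 500 <= exc.status_code < 600
    # Listed types usually signal bugs or bad input, so they are not retried.
    if isinstance(exc, NON_RETRYABLE):
        return False
    # Anything else is treated as potentially transient.
    return True


class FlakyServiceError(Exception):
    """Hypothetical application error not covered by the list above."""


print(approx_default_retry_on(HTTPStatusLikeError(503)))  # True
print(approx_default_retry_on(HTTPStatusLikeError(404)))  # False
print(approx_default_retry_on(KeyError("missing")))       # False
print(approx_default_retry_on(FlakyServiceError()))       # True
```

Note that `KeyError` is not retried because it subclasses `LookupError`; matching is by `isinstance`, so subclasses of every listed type are excluded too.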

Consider an example in which we read from a SQL database. Below we pass a different retry policy to each of the two nodes:

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
import sqlite3

import sqlalchemy.exc
from langchain.chat_models import init_chat_model
from langchain.messages import AIMessage
from langchain_community.utilities import SQLDatabase
from langgraph.graph import END, MessagesState, StateGraph, START
from langgraph.types import RetryPolicy

# Replace this URI with a database that has an Artist table (for example, the
# Chinook sample database); an empty in-memory database has no tables to query.
db = SQLDatabase.from_uri("sqlite:///:memory:")
model = init_chat_model("claude-haiku-4-5-20251001")


def query_database(state: MessagesState):
    query_result = db.run("SELECT * FROM Artist LIMIT 10;")
    return {"messages": [AIMessage(content=query_result)]}


def call_model(state: MessagesState):
    response = model.invoke(state["messages"])
    return {"messages": [response]}


# Define a new graph
builder = StateGraph(MessagesState)
builder.add_node(
    "query_database",
    query_database,
    # SQLDatabase runs queries through SQLAlchemy, which wraps driver errors such as
    # sqlite3.OperationalError in sqlalchemy.exc.OperationalError, so match both.
    retry_policy=RetryPolicy(
        retry_on=(sqlite3.OperationalError, sqlalchemy.exc.OperationalError)
    ),
)
builder.add_node("model", call_model, retry_policy=RetryPolicy(max_attempts=5))
builder.add_edge(START, "model")
builder.add_edge("model", "query_database")
builder.add_edge("query_database", END)
graph = builder.compile()
```
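`retry_on` accepts exception classes, and matching works like `isinstance`: subclasses match, unrelated classes do not. This matters when a library wraps driver errors. For example, SQLAlchemy (which `SQLDatabase` uses) raises its own `sqlalchemy.exc.OperationalError`, which is not a subclass of `sqlite3.OperationalError`. The stdlib sketch below shows the matching rule using a real `sqlite3` error and a hypothetical wrapper class; `should_retry` is an illustrative helper, not a LangGraph function.

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
import sqlite3
from collections.abc import Callable, Sequence
from typing import Union

RetryOn = Union[type, Sequence[type], Callable[[Exception], bool]]


def should_retry(exc: Exception, retry_on: RetryOn) -> bool:
    """Hypothetical helper: does exc match a retry_on-style setting?"""
    if isinstance(retry_on, type):
        return isinstance(exc, retry_on)
    if isinstance(retry_on, Sequence):
        return isinstance(exc, tuple(retry_on))
    # Otherwise treat retry_on as a predicate.
    return retry_on(exc)


class WrappedOperationalError(Exception):
    """Hypothetical wrapper error that does NOT subclass sqlite3.OperationalError."""


# Produce a real sqlite3.OperationalError: the in-memory database has no Artist table.
conn = sqlite3.connect(":memory:")
try:
    conn.execute("SELECT * FROM Artist LIMIT 10;")
except sqlite3.OperationalError as exc:
    driver_error = exc
finally:
    conn.close()

wrapped_error = WrappedOperationalError(str(driver_error))

print(should_retry(driver_error, sqlite3.OperationalError))   # True
print(should_retry(wrapped_error, sqlite3.OperationalError))  # False
print(should_retry(wrapped_error, (sqlite3.OperationalError, WrappedOperationalError)))  # True
print(should_retry(wrapped_error, lambda e: "no such table" in str(e)))  # True
```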

## Set node timeouts

Use the `timeout` parameter with [`add_node`](https://reference.langchain.com/python/langgraph/graph/state/StateGraph/add_node) to limit how long a single async node invocation can run. Provide the timeout in seconds or as a `datetime.timedelta`.

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
import asyncio

from typing_extensions import TypedDict

from langgraph.errors import NodeTimeoutError
from langgraph.graph import END, START, StateGraph


class State(TypedDict):
    value: str


async def call_model(state: State) -> State:
    # Simulate a slow call that exceeds the 1-second timeout below
    await asyncio.sleep(2)
    return {"value": "done"}


builder = StateGraph(State)
builder.add_node("model", call_model, timeout=1.0)
builder.add_edge(START, "model")
builder.add_edge("model", END)
graph = builder.compile()


async def main() -> None:
    try:
        await graph.ainvoke({"value": "start"})
    except NodeTimeoutError:
        print("Node timed out")


# In a notebook you can `await main()` directly; in a script, use asyncio.run
asyncio.run(main())
```

Node timeouts are supported only for async nodes. If you set `timeout` on a sync node, LangGraph raises an error when the graph is compiled because sync Python execution cannot be safely canceled in-process.
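The async-only restriction follows from how cancellation works in Python: a coroutine can be interrupted at an `await` point, while a running synchronous function cannot be stopped from outside in-process. The stdlib-only illustration below uses `asyncio.wait_for` to show the general mechanism; it is not necessarily how LangGraph enforces `timeout` internally.

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
import asyncio


async def slow_node() -> str:
    # Each await is a point where the event loop can cancel this coroutine.
    await asyncio.sleep(0.2)
    return "done"


async def run_with_timeout(timeout: float) -> str:
    try:
        # wait_for cancels slow_node if it has not finished within `timeout` seconds.
        return await asyncio.wait_for(slow_node(), timeout=timeout)
    except asyncio.TimeoutError:
        # On Python 3.11+, asyncio.TimeoutError is the built-in TimeoutError.
        return "timed out"


print(asyncio.run(run_with_timeout(0.05)))  # timed out
print(asyncio.run(run_with_timeout(1.0)))   # done
```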

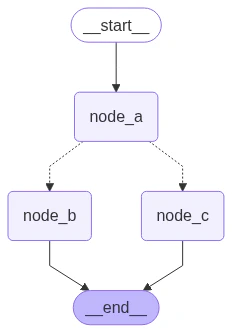

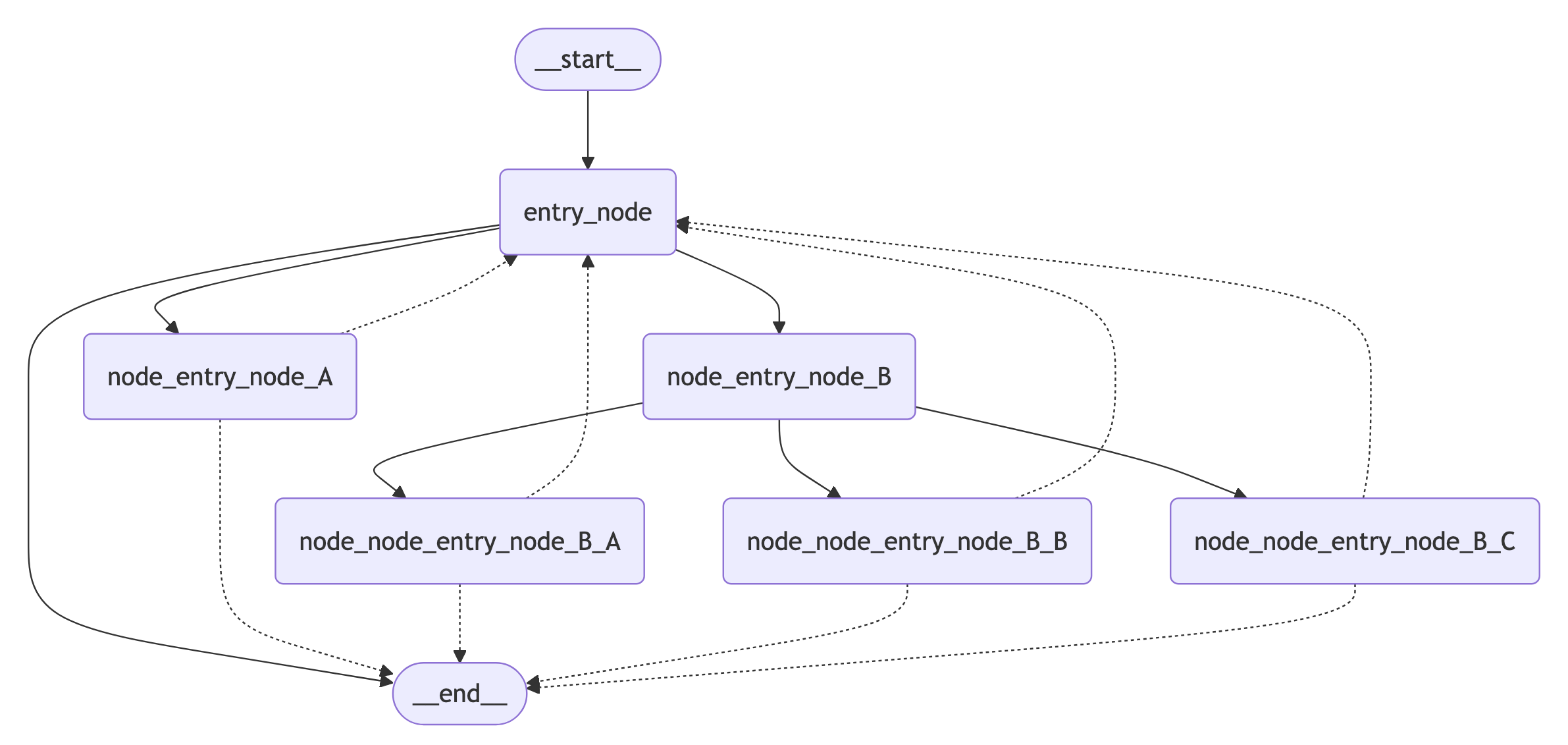

When a node exceeds its timeout, LangGraph raises `NodeTimeoutError`, which subclasses Python's built-in `TimeoutError`. If the node has a `retry_policy` that retries `TimeoutError` or `NodeTimeoutError`, the timed-out attempt is retried. The timeout applies to each attempt independently, so the timer resets for every retry.
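Because the timeout applies to each attempt independently, every retry gets a fresh time budget. The sketch below models that with a separate `asyncio.wait_for` call per attempt inside a retry loop: the first attempt is too slow and times out, and the second finishes within its own limit. `NodeTimeoutSketch` and `run_node_with_retries` are hypothetical names, and the loop approximates the behavior described above rather than reproducing LangGraph's code.

```python theme={"theme":{"light":"catppuccin-latte","dark":"catppuccin-mocha"}}
import asyncio
from typing import Awaitable, Callable


class NodeTimeoutSketch(TimeoutError):
    """Hypothetical stand-in for NodeTimeoutError (also a TimeoutError subclass)."""


async def run_node_with_retries(
    make_attempt: Callable[[int], Awaitable[str]], timeout: float, max_attempts: int
) -> str:
    for attempt in range(1, max_attempts + 1):
        try:
            # A fresh wait_for per attempt: the timer restarts on every retry.
            return await asyncio.wait_for(make_attempt(attempt), timeout=timeout)
        except asyncio.TimeoutError:
            if attempt == max_attempts:
                raise NodeTimeoutSketch(f"timed out after {attempt} attempt(s)")
    raise ValueError("max_attempts must be at least 1")


async def node_attempt(attempt: int) -> str:
    # The first attempt takes too long; later attempts are fast.
    await asyncio.sleep(0.3 if attempt == 1 else 0.01)
    return f"done on attempt {attempt}"


print(asyncio.run(run_node_with_retries(node_attempt, timeout=0.1, max_attempts=3)))
# done on attempt 2
```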