Enable human intervention

To review, edit, and approve tool calls in an agent or workflow, use interrupts to pause a graph and wait for human input. Interrupts use LangGraph’s persistence layer, which saves the graph state, to indefinitely pause graph execution until you resume.

For more information about human-in-the-loop workflows, see the Human-in-the-Loop conceptual guide.

Pause using interrupt

Dynamic interrupts (also known as dynamic breakpoints) are triggered based on the current state of the graph. You can set dynamic interrupts by calling the `interrupt` function in the appropriate place. The graph pauses, which allows for human intervention, and then resumes with the human’s input. This is useful for tasks like approvals, edits, or gathering additional context.

To use interrupt in your graph, you need to:

- Specify a checkpointer to save the graph state after each step.

- Call `interrupt()` in the appropriate place. See the Common patterns section for examples.

- Run the graph with a thread ID until the `interrupt` is hit.

- Resume execution using `invoke`/`stream` (see The `Command` primitive).

- `interrupt(...)` pauses execution at `humanNode`, surfacing the given payload to a human.

- Any JSON serializable value can be passed to the `interrupt` function. Here, an object containing the text to revise.

- Once resumed, the return value of `interrupt(...)` is the human-provided input, which is used to update the state.

- A checkpointer is required to persist graph state. In production, this should be durable (e.g., backed by a database).

- The graph is invoked with some initial state.

- When the graph hits the interrupt, it returns an object with `__interrupt__` containing the payload and metadata.

- The graph is resumed with a `Command({ resume: ... })`, injecting the human’s input and continuing execution.

Extended example: using `interrupt`

New in 0.4.0

`__interrupt__` is a special key that is returned when running the graph if the graph is interrupted. Support for `__interrupt__` in `invoke` was added in version 0.4.0. If you’re on an older version, you will only see `__interrupt__` in the result if you use `stream`. You can also use `graph.getState(config)` to get the interrupt value(s).

Interrupts are both powerful and ergonomic, but they do not automatically resume execution from the interrupt point. Instead, they rerun the entire node where the interrupt was used. For this reason, interrupts are typically best placed at the start of a node or in a dedicated node.

Resume using the Command primitive

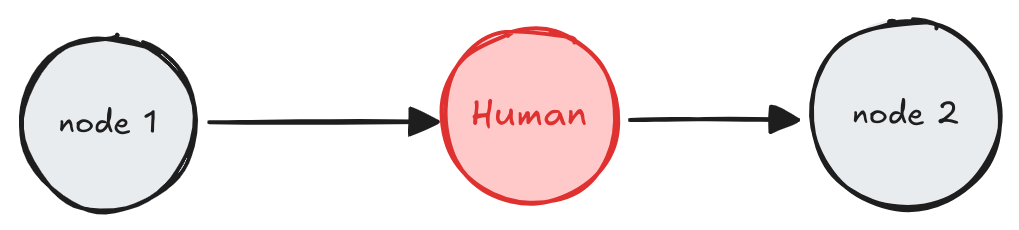

When the interrupt function is used within a graph, execution pauses at that point and awaits user input.

To resume execution, use the `Command` primitive, which can be supplied via the `invoke` or `stream` methods. The graph resumes execution from the beginning of the node where `interrupt(...)` was initially called. This time, the `interrupt` function returns the value provided in `Command({ resume: value })` rather than pausing again. All code from the beginning of the node up to the `interrupt` is re-executed.
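The re-execution behaviour can be illustrated with a toy model in plain TypeScript. This is only a sketch under stated assumptions, not LangGraph’s implementation: `PauseSignal`, `reviewNode`, and `runNode` are hypothetical names, and the stand-in `interrupt` is passed to the node as a parameter rather than imported from the library.

```typescript
// Toy model of pause-and-resume; NOT LangGraph's implementation.
// All names here (PauseSignal, reviewNode, runNode) are hypothetical.
class PauseSignal {
  readonly payload: unknown;
  constructor(payload: unknown) {
    this.payload = payload;
  }
}

type InterruptFn = (payload: unknown) => unknown;
type RunOutcome =
  | { paused: true; payload: unknown }
  | { paused: false; output: string };

const executionLog: string[] = [];

// A node body: the line before interrupt() runs on every pass.
function reviewNode(interrupt: InterruptFn): string {
  executionLog.push("before interrupt");
  const answer = interrupt({ question: "Approve this step?" });
  executionLog.push("after interrupt");
  return "human said: " + String(answer);
}

// Always starts the node from the top. With no resume value, interrupt()
// throws to pause; with one, interrupt() returns it instead.
function runNode(resumeValue?: string): RunOutcome {
  const interrupt: InterruptFn = (payload) => {
    if (resumeValue === undefined) throw new PauseSignal(payload);
    return resumeValue;
  };
  try {
    return { paused: false, output: reviewNode(interrupt) };
  } catch (err) {
    if (err instanceof PauseSignal) return { paused: true, payload: err.payload };
    throw err;
  }
}

const firstPass = runNode();           // pauses at interrupt()
const secondPass = runNode("approve"); // re-runs the node from the top
console.log(firstPass.paused, secondPass.paused, executionLog);
```

In this sketch, `"before interrupt"` is logged twice and `"after interrupt"` once, which mirrors the rule that everything ahead of the interrupt runs again on resume.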

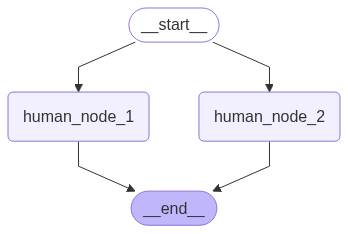

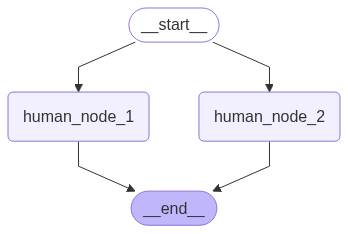

Resume multiple interrupts with one invocation

When nodes with interrupt conditions are run in parallel, it’s possible to have multiple interrupts in the task queue. For example, the following graph has two nodes run in parallel that require human input:

Common patterns

Below we show different design patterns that can be implemented using `interrupt` and `Command`.

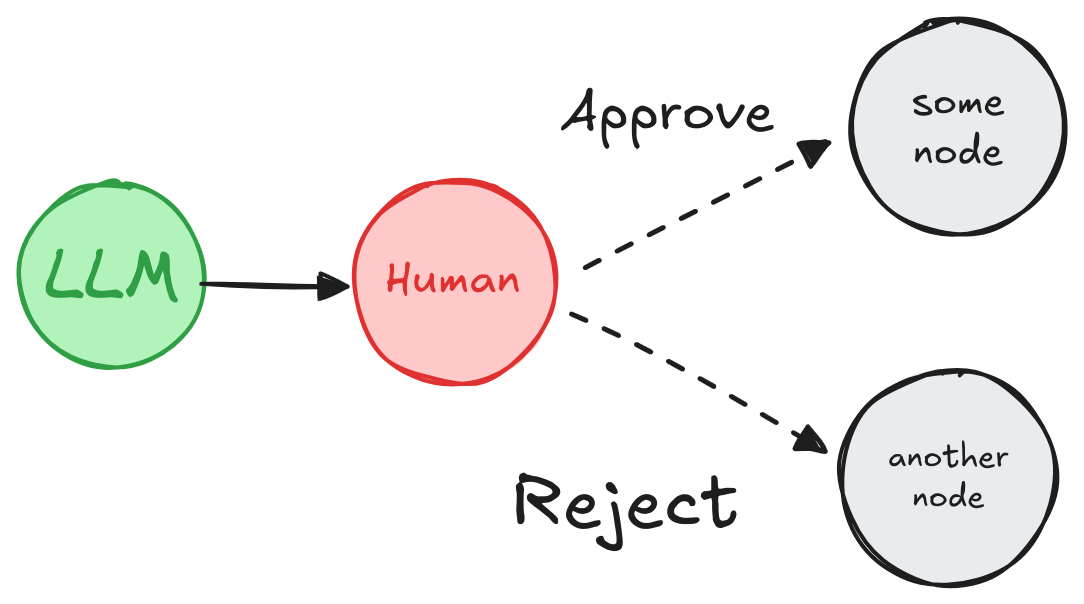

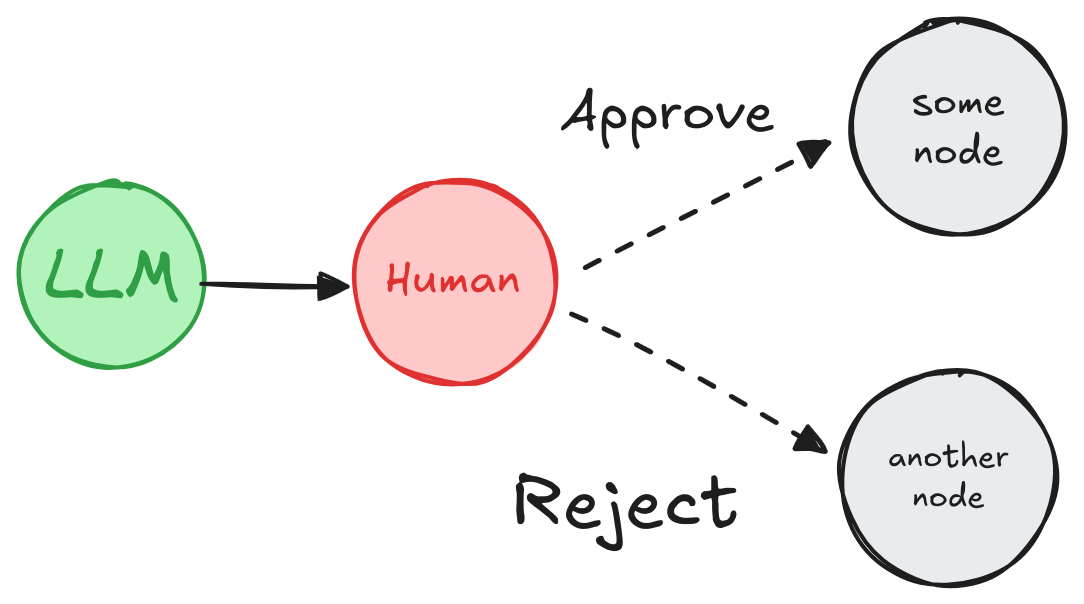

Approve or reject

Pause the graph before a critical step, such as an API call, to review and approve the action. If the action is rejected, you can prevent the graph from executing the step, and potentially take an alternative action.

Extended example: approve or reject with interrupt

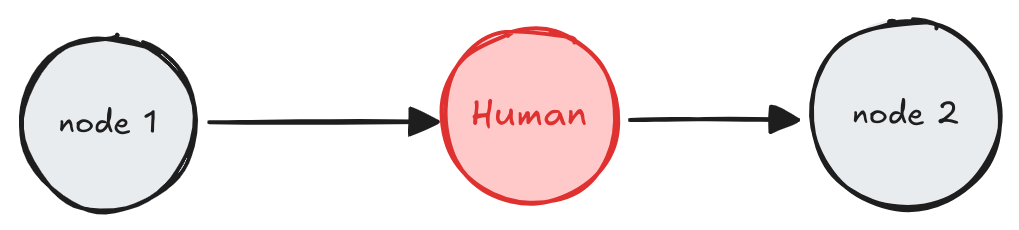

Review and edit state

Extended example: edit state with interrupt

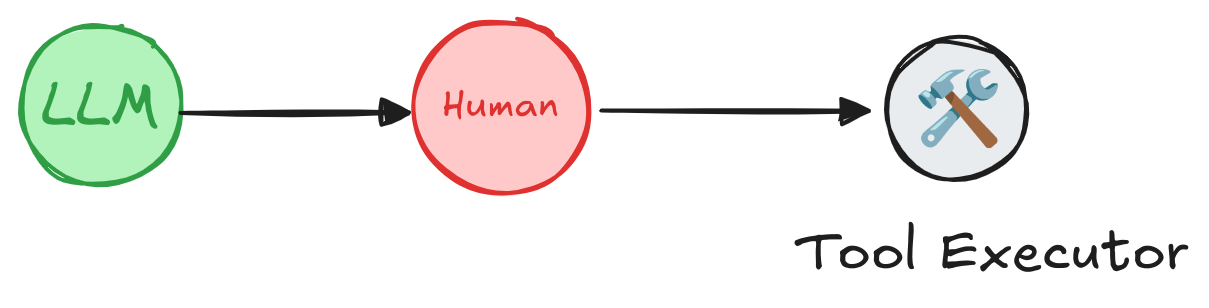

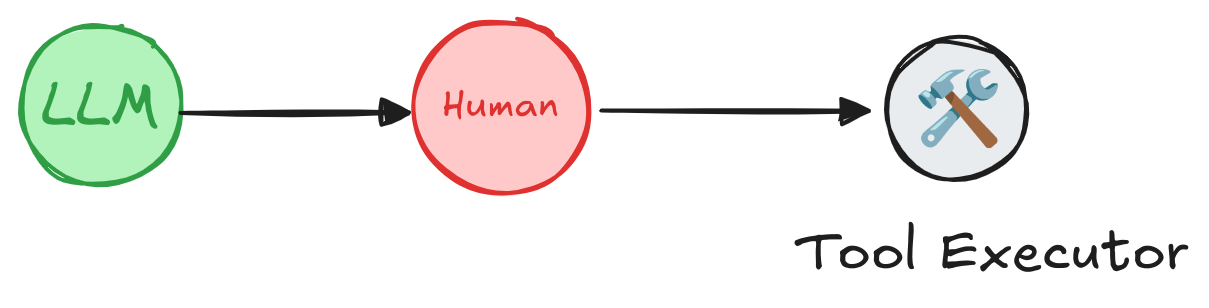

Review tool calls

To add a human approval step to a tool:

- Use `interrupt()` in the tool to pause execution.

- Resume with a `Command` to continue based on human input.

- The `interrupt` function pauses the agent graph at a specific node. In this case, we call `interrupt()` at the beginning of the tool function, which pauses the graph at the node that executes the tool. The information inside `interrupt()` (e.g., tool calls) can be presented to a human, and the graph can be resumed with the user input (tool call approval, edit or feedback).

- The `MemorySaver` is used to store the agent state at every step in the tool calling loop. This enables short-term memory and human-in-the-loop capabilities. In this example, we use `MemorySaver` to store the agent state in memory. In a production application, the agent state will be stored in a database.

- Initialize the agent with the `checkpointSaver`.

Run the agent with the `stream()` method, passing the `config` object to specify the thread ID. This allows the agent to resume the same conversation on future invocations.

You should see that the agent runs until it reaches the interrupt() call, at which point it pauses and waits for human input.

Resume the agent with a Command to continue based on human input.

- The `interrupt` function is used in conjunction with the `Command` object to resume the graph with a value provided by the human.

Add interrupts to any tool

You can create a wrapper to add interrupts to any tool. The example below provides a reference implementation compatible with Agent Inbox UI and Agent Chat UI.

Wrapper that adds human-in-the-loop to any tool

- This wrapper creates a new tool that calls `interrupt()` before executing the wrapped tool.

- `interrupt()` uses a special input and output format that’s expected by Agent Inbox UI:
  - a list of `HumanInterrupt` objects is sent to `AgentInbox` to render interrupt information to the end user
  - the resume value is provided by `AgentInbox` as a list (i.e., `Command({ resume: [...] })`)

You can use the `addHumanInTheLoop` wrapper to add `interrupt()` to any tool without having to add it inside the tool:

- The `addHumanInTheLoop` wrapper is used to add `interrupt()` to the tool. This allows the agent to pause execution and wait for human input before proceeding with the tool call.

You should see that the agent runs until it reaches the `interrupt()` call, at which point it pauses and waits for human input.

Resume the agent with a Command to continue based on human input.

Validate human input

If you need to validate the input provided by the human within the graph itself (rather than on the client side), you can achieve this by using multiple `interrupt` calls within a single node.

Extended example: validating user input
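The validation loop can be sketched without the library as a toy model in plain TypeScript. It is an illustration under stated assumptions rather than LangGraph’s implementation: `NeedsInput`, `askAgeNode`, and `runWithAnswers` are hypothetical names, and the `ask` callback stands in for `interrupt`. Each loop iteration issues one more interrupt, and earlier answers are replayed in call order when the node re-runs.

```typescript
// Toy model; NOT LangGraph's implementation. All names are hypothetical.
class NeedsInput {
  readonly prompt: string;
  constructor(prompt: string) {
    this.prompt = prompt;
  }
}

type AskFn = (prompt: string) => string;

// Node that keeps asking until the answer is a positive whole number.
function askAgeNode(ask: AskFn): number {
  let prompt = "What is your age?";
  while (true) {
    const answer = ask(prompt); // one interrupt per loop iteration
    if (/^\d+$/.test(answer) && parseInt(answer, 10) > 0) {
      return parseInt(answer, 10);
    }
    prompt = '"' + answer + '" is not a valid age. What is your age?';
  }
}

// Re-runs the node from the top, replaying earlier answers in call order.
function runWithAnswers(answers: string[]): number | NeedsInput {
  let nextIndex = 0;
  const ask: AskFn = (prompt) => {
    const stored = answers[nextIndex];
    if (stored === undefined) throw new NeedsInput(prompt); // pause here
    nextIndex += 1;
    return stored;
  };
  try {
    return askAgeNode(ask);
  } catch (err) {
    if (err instanceof NeedsInput) return err;
    throw err;
  }
}

const afterStart = runWithAnswers([]);                 // pauses on the first question
const afterBadAnswer = runWithAnswers(["abc"]);        // pauses again with an error prompt
const afterGoodAnswer = runWithAnswers(["abc", "42"]); // finishes with 42
```

Because the node restarts from the top on every resume, the invalid answer `"abc"` is replayed first and the valid answer is consumed by the second `ask` call.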

Considerations

When using human-in-the-loop, there are some considerations to keep in mind.

Using with code with side-effects

Place code with side effects, such as API calls, after the `interrupt` or in a separate node to avoid duplication, as these are re-triggered every time the node is resumed.
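A small toy model in plain TypeScript shows why placement matters. It does not use LangGraph itself and is only a sketch: the `callApi` counter stands in for a real side effect, `countApiCalls` imitates one paused pass followed by one resumed pass of the same node, and every name is hypothetical.

```typescript
// Toy model; NOT LangGraph's implementation. All names are hypothetical.
class PauseSignal {}

type InterruptFn = (payload: string) => string;
type NodeFn = (interrupt: InterruptFn, callApi: () => void) => void;

// Side effect placed BEFORE the interrupt: repeated on every pass.
const effectBefore: NodeFn = (interrupt, callApi) => {
  callApi();
  interrupt("Approve?");
};

// Side effect placed AFTER the interrupt: only reached once resumed.
const effectAfter: NodeFn = (interrupt, callApi) => {
  interrupt("Approve?");
  callApi();
};

// Runs the node once without a resume value (it pauses) and once with one,
// then reports how many times the side effect fired.
function countApiCalls(node: NodeFn): number {
  let calls = 0;
  const callApi = () => {
    calls += 1;
  };
  const passes: Array<string | undefined> = [undefined, "yes"];
  for (const resumeValue of passes) {
    const interrupt: InterruptFn = () => {
      if (resumeValue === undefined) throw new PauseSignal();
      return resumeValue;
    };
    try {
      node(interrupt, callApi);
    } catch (err) {
      if (!(err instanceof PauseSignal)) throw err;
    }
  }
  return calls;
}

const callsBefore = countApiCalls(effectBefore); // 2: the call is duplicated
const callsAfter = countApiCalls(effectAfter);   // 1: the call runs once
```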

Using with subgraphs called as functions

When invoking a subgraph as a function, the parent graph resumes execution from the beginning of the node where the subgraph was invoked (and where the `interrupt` was triggered). Similarly, the subgraph resumes from the beginning of the node where the `interrupt()` function was called.

Extended example: parent and subgraph execution flow

Say we have a parent graph with 3 nodes:

Parent Graph: node_1 → node_2 (subgraph call) → node_3

And the subgraph has 3 nodes, where the second node contains an interrupt:

Subgraph: sub_node_1 → sub_node_2 (interrupt) → sub_node_3

When resuming the graph, the execution will proceed as follows:

- Skip `node_1` in the parent graph (already executed, graph state was saved in snapshot).

- Re-execute `node_2` in the parent graph from the start.

- Skip `sub_node_1` in the subgraph (already executed, graph state was saved in snapshot).

- Re-execute `sub_node_2` in the subgraph from the beginning.

- Continue with `sub_node_3` and subsequent nodes.
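The resume order listed above can be reproduced with a toy simulation in plain TypeScript. This is a sketch and not LangGraph’s implementation: checkpoints are modelled as plain arrays of finished node labels, and `Paused`, `Step`, `runGraph`, and `execute` are hypothetical names.

```typescript
// Toy simulation; NOT LangGraph's implementation. All names are hypothetical.
class Paused {}

type Step = { label: string; run: () => void };

// Runs steps in order, skipping those already recorded in `checkpoint`.
function runGraph(steps: Step[], checkpoint: string[], trace: string[]): void {
  for (const step of steps) {
    if (checkpoint.indexOf(step.label) !== -1) continue; // finished earlier
    trace.push(step.label);
    step.run(); // may throw Paused, which leaves this step unfinished
    checkpoint.push(step.label);
  }
}

// One invocation of the parent graph; returns the nodes that started running.
function execute(
  resumeValue: string | undefined,
  parentDone: string[],
  subDone: string[],
): string[] {
  const trace: string[] = [];
  const subSteps: Step[] = [
    { label: "sub_node_1", run: () => {} },
    {
      label: "sub_node_2",
      run: () => {
        if (resumeValue === undefined) throw new Paused(); // the interrupt
      },
    },
    { label: "sub_node_3", run: () => {} },
  ];
  const parentSteps: Step[] = [
    { label: "node_1", run: () => {} },
    { label: "node_2", run: () => runGraph(subSteps, subDone, trace) },
    { label: "node_3", run: () => {} },
  ];
  try {
    runGraph(parentSteps, parentDone, trace);
  } catch (err) {
    if (!(err instanceof Paused)) throw err;
  }
  return trace;
}

const parentCheckpoint: string[] = [];
const subCheckpoint: string[] = [];
const firstRun = execute(undefined, parentCheckpoint, subCheckpoint);
const resumedRun = execute("approved", parentCheckpoint, subCheckpoint);
console.log(firstRun.join(" > "));   // node_1 > node_2 > sub_node_1 > sub_node_2
console.log(resumedRun.join(" > ")); // node_2 > sub_node_2 > sub_node_3 > node_3
```

In the resumed run, `node_1` and `sub_node_1` are absent because their checkpoints mark them as finished, while `node_2` and `sub_node_2` start again from the top.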

Using multiple interrupts in a single node

Using multiple interrupts within a single node can be helpful for patterns like validating human input. However, using multiple interrupts in the same node can lead to unexpected behavior if not handled carefully.

When a node contains multiple `interrupt` calls, LangGraph keeps a list of resume values specific to the task executing the node. Whenever execution resumes, it starts at the beginning of the node. For each interrupt encountered, LangGraph checks if a matching value exists in the task’s resume list. Matching is strictly index-based, so the order of `interrupt` calls within the node is critical.

To avoid issues, refrain from dynamically changing the node’s structure between executions. This includes adding, removing, or reordering `interrupt` calls, as such changes can result in mismatched indices. These problems often arise from unconventional patterns, such as mutating state via `Command({ resume: ..., update: SOME_STATE_MUTATION })` or relying on global variables to modify the node’s structure dynamically.
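A toy model in plain TypeScript makes the index-based matching concrete. It is a sketch under stated assumptions, not LangGraph’s implementation: `PendingInterrupt`, `runTask`, `profileNode`, and `reorderedNode` are hypothetical names, and the `ask` callback stands in for `interrupt`.

```typescript
// Toy model of index-based matching; NOT LangGraph's implementation.
// All names are hypothetical.
class PendingInterrupt {
  readonly payload: string;
  constructor(payload: string) {
    this.payload = payload;
  }
}

type AskFn = (question: string) => string;

// Runs `node` from the top, handing out stored resume values by call order.
function runTask(
  node: (ask: AskFn) => string,
  storedValues: string[],
): string | PendingInterrupt {
  let callIndex = 0;
  const ask: AskFn = (question) => {
    const value = storedValues[callIndex];
    if (value === undefined) throw new PendingInterrupt(question);
    callIndex += 1;
    return value;
  };
  try {
    return node(ask);
  } catch (err) {
    if (err instanceof PendingInterrupt) return err;
    throw err;
  }
}

// Node with two interrupts in a fixed order.
function profileNode(ask: AskFn): string {
  const userName = ask("name?");
  const age = ask("age?");
  return userName + " is " + age;
}

// The same node with its interrupt calls reordered between executions.
function reorderedNode(ask: AskFn): string {
  const age = ask("age?");
  const userName = ask("name?");
  return userName + " is " + age;
}

const stillWaiting = runTask(profileNode, ["Ada"]);    // pauses on "age?"
const inOrder = runTask(profileNode, ["Ada", "36"]);   // "Ada is 36"
const reordered = runTask(reorderedNode, ["Ada", "36"]); // "36 is Ada"
```

The last line shows the failure mode: the stored values are unchanged, but because the calls were reordered, each value lands on the wrong interrupt.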