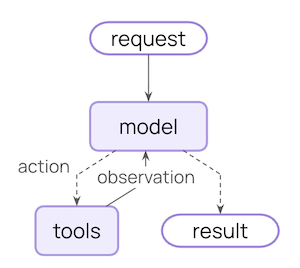

An agent is a model calling tools in a loop until a given task is complete.

Agent = Model + Harness

The job of a harness: get the model the right context at the right time for the given task.
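To make the loop concrete, here is a minimal plain-Python sketch. It does not use LangChain: `fake_model`, `TOOLS`, and `run_agent` are hypothetical stand-ins, and the message dictionaries are a simplified format chosen for illustration.

```python
from typing import Callable

# Hypothetical tool registry: plain functions keyed by name.
TOOLS: dict[str, Callable[..., str]] = {
    "add": lambda a, b: str(a + b),
}


def fake_model(messages: list[dict]) -> dict:
    """Stand-in for an LLM: requests one tool call, then answers."""
    last = messages[-1]
    if last["role"] == "tool":
        return {"role": "assistant", "content": f"The answer is {last['content']}."}
    return {
        "role": "assistant",
        "content": "",
        "tool_call": {"name": "add", "args": {"a": 2, "b": 3}},
    }


def run_agent(task: str, max_steps: int = 5) -> list[dict]:
    """The harness: call the model, run any requested tool, repeat."""
    messages = [{"role": "user", "content": task}]
    for _ in range(max_steps):
        reply = fake_model(messages)
        messages.append(reply)
        call = reply.get("tool_call")
        if call is None:  # no tool requested, so the task is complete
            break
        result = TOOLS[call["name"]](**call["args"])
        messages.append({"role": "tool", "content": result})
    return messages
```

The `max_steps` bound is a design choice of this sketch: it keeps a misbehaving model from looping forever.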

create_agent is a highly configurable harness. At its simplest, it takes three arguments: model=, tools=, and system_prompt=. For more advanced capabilities, extend the harness with middleware.
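A minimal sketch of the three-argument form, assuming the `langchain` package is installed and credentials for the chosen provider are configured. The model string, the `get_weather` tool, and the `build_agent` and `ask` helpers are illustrative names, not part of the library.

```python
# Assumption: replace with any "provider:model" identifier you have access to.
MODEL = "openai:gpt-4o-mini"


def get_weather(city: str) -> str:
    """Get the weather for a given city."""
    return f"It's always sunny in {city}!"


def build_agent():
    # Imported lazily so the tool above can be exercised without the package.
    from langchain.agents import create_agent

    return create_agent(
        model=MODEL,
        tools=[get_weather],
        system_prompt="You are a helpful assistant.",
    )


def ask(question: str) -> str:
    """Run the agent once and return the text of its final message."""
    agent = build_agent()
    result = agent.invoke({"messages": [{"role": "user", "content": question}]})
    return result["messages"][-1].content
```

Calling `ask("What's the weather in Paris?")` makes a real model call, so it needs network access and an API key.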

Core components

Model

Pass a model identifier string ("provider:model") or an initialized model instance. See Models for parameters, provider setup, and dynamic model selection.

Tools

Pass any Python callable, LangChain tool, or tool dict. See Tools for tool definition, context access, and dynamic tool selection.

System prompt

Shape how the agent approaches tasks. Accepts a string or SystemMessage. For dynamic prompts at runtime, use middleware.

Structured output

Return a validated schema from the agent using response_format=. See Structured output for strategies and examples.
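As a hedged sketch of response_format=, the example below passes a dataclass as the schema and reads the result from the `structured_response` key of the returned state. `ContactInfo`, `extract_contact`, and the model string are illustrative assumptions; the exact return type for each schema kind is described on the Structured output page.

```python
from dataclasses import dataclass


@dataclass
class ContactInfo:
    """Contact details extracted from free text."""

    name: str
    email: str


def extract_contact(text: str):
    # Imported lazily; requires the langchain package and provider credentials.
    from langchain.agents import create_agent

    agent = create_agent(
        model="openai:gpt-4o-mini",  # assumption: any "provider:model" string
        response_format=ContactInfo,
    )
    result = agent.invoke({"messages": [{"role": "user", "content": text}]})
    # The structured result sits alongside the message history in the state.
    return result["structured_response"]
```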

Name

Optional identifier used as the node name when embedding this agent as a subgraph in multi-agent systems.

Invocation

You can invoke an agent by passing an update to its State. All agents include a sequence of messages in their state; to invoke the agent, pass a new message along with a thread_id so the agent can persist and resume conversation history.

Persisting conversation history with thread_id requires the agent to be configured with a checkpointer. When deployed on LangSmith, a checkpointer is provisioned automatically. Locally, pass one explicitly, for example create_agent(..., checkpointer=InMemorySaver()).

To supply per-run data, pass context alongside the config. Define the shape of that data with context_schema and access it through runtime.context.

thread_id scopes the conversation (message history, checkpoints), while context carries per-run data your tools and middleware read at invocation time. Both are commonly passed together. See tool context and Runtime for more.
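The sketch below shows one way to pass both together, assuming `langchain` and `langgraph` are installed. The `Context` dataclass, the `invocation_kwargs` and `chat` helpers, the model string, and the example IDs are hypothetical; reading the value inside a tool through runtime.context is not shown here.

```python
from dataclasses import dataclass


@dataclass
class Context:
    """Per-run data made available to tools and middleware."""

    user_id: str


def invocation_kwargs(thread_id: str, user_id: str) -> dict:
    """Build the keyword arguments passed alongside the input state."""
    return {
        # thread_id selects which persisted conversation to resume.
        "config": {"configurable": {"thread_id": thread_id}},
        # context carries data for this run only.
        "context": Context(user_id=user_id),
    }


def chat(question: str, thread_id: str = "thread-1", user_id: str = "user-123"):
    # Imported lazily; requires the packages and provider credentials.
    from langchain.agents import create_agent
    from langgraph.checkpoint.memory import InMemorySaver

    agent = create_agent(
        model="openai:gpt-4o-mini",  # assumption: any "provider:model" string
        context_schema=Context,
        checkpointer=InMemorySaver(),  # required locally for thread_id to persist
    )
    inputs = {"messages": [{"role": "user", "content": question}]}
    return agent.invoke(inputs, **invocation_kwargs(thread_id, user_id))
```

Because `chat` builds a fresh `InMemorySaver` on every call, history does not survive between calls in this sketch; a real application would create the agent once and reuse it.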

Streaming

We’ve seen how the agent can be called with invoke to get a final response. If the agent executes multiple steps, this may take a while. To show intermediate progress, we can stream back messages as they occur.
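A minimal sketch of streaming, assuming an agent built with create_agent is passed in. `stream_progress` is a hypothetical helper; it relies on `stream_mode="values"`, which yields the full state after each step, and reports the newest message each time.

```python
from typing import Any, Iterator


def stream_progress(agent: Any, question: str) -> Iterator[Any]:
    """Yield the content of the latest message after each agent step."""
    inputs = {"messages": [{"role": "user", "content": question}]}
    for chunk in agent.stream(inputs, stream_mode="values"):
        latest = chunk["messages"][-1]
        yield latest.content
```

A caller would typically iterate and display each item as it arrives, for example `for text in stream_progress(agent, "..."): print(text)`.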

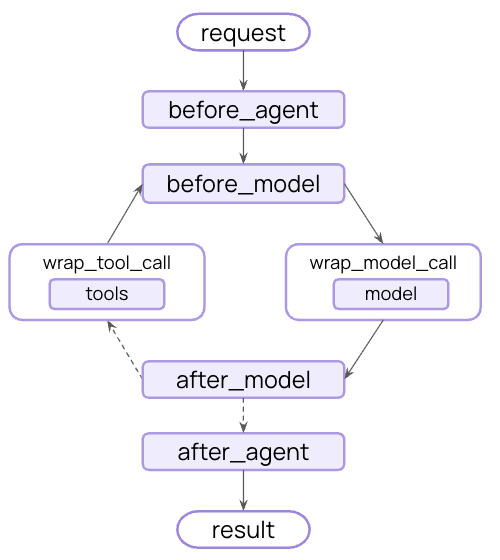

Configure the harness

create_agent is highly extensible. Middleware is the primitive for customization: each piece handles one concern, hooks into the agent loop at the right moment, and composes freely with any other. Take exactly what your use case needs — skip the rest.

Common patterns are pre-built as first-class middleware. Anything custom is one middleware away.

- Execution environment: tools, filesystem, sandboxes, and code execution

- Context management: summarization, memory, skills, and prompt caching

- Planning and delegation: todo lists and subagents for parallel, isolated work

- Fault tolerance: retries, fallbacks, and call limits

- Guardrails: PII detection and content controls

- Steering: human-in-the-loop approval before high-impact actions

Execution environment

Agents are useful when they can take action, not just generate text. The execution environment gives the agent a workspace: tools it can call, a filesystem for reading and writing files across turns, and code execution for running scripts or shell commands.

See FilesystemMiddleware, Sandboxes, and Interpreters.

Context management

Every model call has a fixed context window. As an agent runs, accumulating history, tool results, and intermediate steps, that window fills. Summarization compresses history before overflow hits; memory loads persistent instructions at startup so knowledge carries across sessions; skills surface domain knowledge on demand rather than loading everything upfront.

See SummarizationMiddleware, MemoryMiddleware, SkillsMiddleware, and Context engineering.
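To illustrate the idea of compressing history before the window overflows, here is a plain-Python sketch. It is not how SummarizationMiddleware is implemented: `compact_history` is a hypothetical function, messages are plain strings, and the summary is a placeholder where a real system would ask a model to write one.

```python
def compact_history(
    messages: list[str], max_messages: int, keep_recent: int
) -> list[str]:
    """Replace older messages with one summary once the history grows too long."""
    if keep_recent < 1:
        raise ValueError("keep_recent must be at least 1")
    if len(messages) <= max_messages:
        return messages  # still within budget, nothing to do
    older, recent = messages[:-keep_recent], messages[-keep_recent:]
    # Placeholder: a real system would generate this text with a model call.
    summary = f"[summary of {len(older)} earlier messages]"
    return [summary] + recent
```

This sketch counts messages for simplicity; budgeting by tokens is the more realistic measure of how full a context window is.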

Planning and delegation

Complex tasks often exceed what one context window can handle. Delegation lets the main agent break work into pieces, hand them to subagents that each run in their own isolated context, and stay focused on coordination rather than execution. Work can run in parallel; the main agent’s context stays clean.

See SubAgentMiddleware and Subagents.
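The delegation pattern can be sketched in plain Python. This is a conceptual illustration only, not SubAgentMiddleware: `run_subagent` and `coordinate` are hypothetical, and each "subagent" is a stub function rather than a model.

```python
from concurrent.futures import ThreadPoolExecutor


def run_subagent(subtask: str) -> str:
    """Work on one subtask in a fresh context and return only the result."""
    context = [f"task: {subtask}"]  # isolated: starts with just this subtask
    context.append(f"notes while working on {subtask}")  # stays local
    return f"result for {subtask}"


def coordinate(subtasks: list[str]) -> list[str]:
    """Fan subtasks out in parallel; keep only the plan and the results."""
    main_context = ["plan: " + ", ".join(subtasks)]
    with ThreadPoolExecutor() as pool:
        # map() returns results in the same order as the subtasks.
        results = list(pool.map(run_subagent, subtasks))
    main_context.extend(results)
    return main_context
```

The main context ends up with one line per subtask, while each subagent's intermediate notes are discarded when it returns.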

Fault tolerance

Agents in production encounter failures that rarely appear in development: rate limits, model timeouts, transient API errors. Fault tolerance middleware handles these at the infrastructure level so your tools and business logic don’t need try/except around every call.

See ModelRetryMiddleware, ToolRetryMiddleware, and Prebuilt middleware.
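For a sense of the boilerplate that retry middleware takes off your hands, here is a plain-Python sketch of retrying with exponential backoff. It is not the implementation of ModelRetryMiddleware or ToolRetryMiddleware; `call_with_retry` and its parameter names are assumptions made for illustration.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def call_with_retry(
    fn: Callable[[], T],
    max_retries: int = 3,
    initial_delay: float = 0.5,
    backoff_factor: float = 2.0,
) -> T:
    """Call fn, retrying on any exception with exponentially growing delays."""
    if max_retries < 0:
        raise ValueError("max_retries must be non-negative")
    delay = initial_delay
    for attempt in range(max_retries + 1):
        try:
            return fn()
        except Exception:
            if attempt == max_retries:
                raise  # out of retries: surface the last error
            time.sleep(delay)
            delay *= backoff_factor
    raise RuntimeError("unreachable")
```

Catching every `Exception` keeps the sketch short; production code would usually retry only errors known to be transient.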

Guardrails

Some policies can’t live in a prompt: they need to be enforced deterministically regardless of what the model does. Guardrails intercept data as it flows through the agent loop, applying compliance rules or content policies before tool results reach the model’s context.

See PIIMiddleware and Prebuilt middleware.
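A hedged sketch of attaching PII guardrails, assuming the `langchain` package is installed. `PII_RULES`, `build_guarded_agent`, and the model string are illustrative; the PII types, strategies, and the `apply_to_tool_results` flag are written from memory of the prebuilt middleware reference and should be checked against it.

```python
# (pii_type, strategy) pairs to enforce; an illustrative policy, not a default.
PII_RULES = [("email", "redact"), ("credit_card", "mask")]


def build_guarded_agent():
    # Imported lazily; requires the langchain package and provider credentials.
    from langchain.agents import create_agent
    from langchain.agents.middleware import PIIMiddleware

    middleware = [
        # apply_to_tool_results=True scrubs tool output before the model sees it.
        PIIMiddleware(pii_type, strategy=strategy, apply_to_tool_results=True)
        for pii_type, strategy in PII_RULES
    ]
    return create_agent(
        model="openai:gpt-4o-mini",  # assumption: any "provider:model" string
        middleware=middleware,
    )
```

Each rule becomes its own middleware instance, which matches the one-concern-per-middleware design described above.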

Steering

Full autonomy isn’t always appropriate. Steering lets you place humans at specific decision points (before destructive writes, expensive API calls, or anything requiring judgment) without restructuring your agent. The agent pauses and waits; a human approves, edits, or rejects; execution continues.

See HumanInTheLoopMiddleware and Human-in-the-loop.
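A minimal sketch of gating one tool behind human approval, assuming `langchain` and `langgraph` are installed. The `delete_file` stub, `INTERRUPT_ON`, `build_supervised_agent`, and the model string are hypothetical. A checkpointer is included because the agent has to save its state while it waits; resuming after a decision is covered in the Human-in-the-loop guide and is not shown here.

```python
def delete_file(path: str) -> str:
    """Delete a file (stubbed here: nothing is actually removed)."""
    return f"deleted {path}"


# Tools listed with True pause for a human decision before running.
INTERRUPT_ON = {"delete_file": True}


def build_supervised_agent():
    # Imported lazily; requires the packages and provider credentials.
    from langchain.agents import create_agent
    from langchain.agents.middleware import HumanInTheLoopMiddleware
    from langgraph.checkpoint.memory import InMemorySaver

    return create_agent(
        model="openai:gpt-4o-mini",  # assumption: any "provider:model" string
        tools=[delete_file],
        middleware=[HumanInTheLoopMiddleware(interrupt_on=INTERRUPT_ON)],
        checkpointer=InMemorySaver(),  # persists state while the agent is paused
    )
```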

Middleware resources:

- Middleware overview: how the middleware stack works and when hooks fire

- Prebuilt middleware: full reference with configuration examples

- Custom middleware: write your own hooks for business logic, PII scrubbing, and more
